Last Tuesday, U.S. News released its Best Colleges rankings for 2025.

On the same day, Sports Illustrated published its top 25 Pennsylvania high school football rankings, and Ranker published its list of the 25+ best movies with a bird name in the title (#1: One Flew Over the Cuckoo's Nest).

This is no mere coincidence.

In this newsletter, I'll be describing how our society came to be deluged by ranking systems. Whether sensible or silly, these systems all emerge from the same historical source.

My focus will be on the U.S. News college rankings. They've been described as deeply flawed, and dismissed as a mere popularity contest or joke. I prefer to think of them as a scam.

I realize that's a harsh assessment, but consider: Scams aren't necessarily lacking in value.

For instance, when I visited Tibet in 2011, Chinese law required that foreigners be accompanied by a guide or tour group. I chose a guide who claimed to be knowledgeable but turned out not to be. I still had a meaningful experience.

There's no law requiring anyone to pay attention to the U.S. News rankings, but colleges do so because they're anxious about reputation, enrollments, and alumni generosity, while prospective students and their families seek to identify the most prestigious schools that are within reach. Meanwhile, like my Tibetan guide, U.S. News claims to do much that it's incapable of doing, but there's still some value in its ranking data. After discussing how that data is created, I'll touch on some alternative approaches that are freely available.

(Semantic note: Whether an institution of higher education calls itself a college, a university, or something else, I'll be using the term "college", since that's the umbrella term U.S. News uses.)

The rise of modern rankings

Ranking systems are as old as our species. By arranging a set of sticks from longest to shortest, a preschooler ranks them according to a single, quantitative variable (i.e., length).

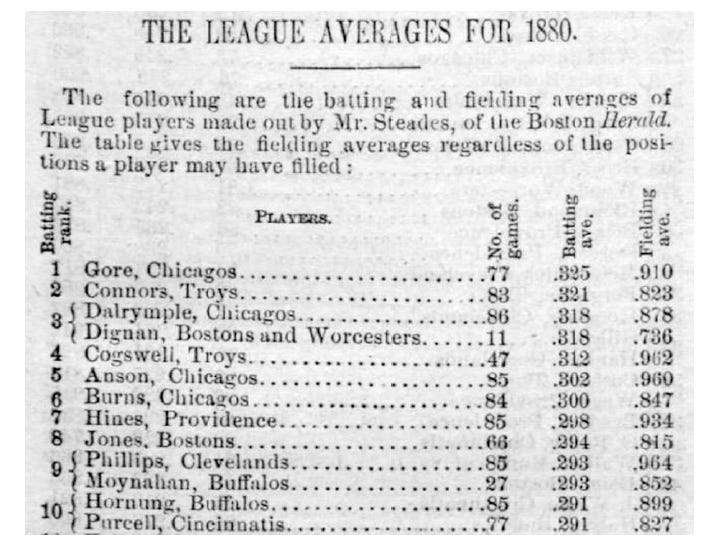

Historically, ranking systems first became popular in the late 19th and early 20th centuries. For instance, check out the table below from the 1881 edition of Beadle's Dime Baseball Player.

I love this table! From a single image, we can suss out much of the story of how our society became saturated by rankings.

You don't need to care about pro baseball to appreciate the table. Just know that it's a list of players from the 1880 season, ranked from highest to lowest in batting average. The numbers on the far left are "Batting Rank". The columns on the right are number of games played, batting average, and fielding average, respectively. (George Gore of the Chicago White Stockings led the league by hitting .325. Seems to have been a pitcher's game back then.)

The sociologists Leopold Ringel and Tobias Werron point out that ranking systems are, in essence, a complex zero-sum game. Whether athletes or colleges are being assessed, as some individuals move up in rank, others necessarily move down.

Ringel and Werron also note that rankings are public and repeatedly aired over time. (U.S. News, for instance, has published its college rankings every year since 1983.)

Think about what was necessary for Beadle's to publish a ranking of batting averages among pro baseball players. A league was needed, of course. Expansion of railroads in the 19th century made it feasible, for the first time, for sports teams from different cities to compete on a regular basis. More importantly, mass media such as magazines and newspapers created a national audience. Late 19th-century sports fans could follow league standings (i.e., rankings) from week to week while sitting at home.

In short, the popularity of ranking systems can be traced to the emergence of high-circulation mass media in the late 19th century.

Here's a more careful way of putting it: People have always made comparisons and decided that one thing is better or worse than another. This is a natural tendency. What's special about the 19th century is how widely and rapidly print technology began to stoke our natural eagerness for rankings.

This brings me to one other characteristic of ranking systems that Ringel and Werron mention: They're visual. Typically this means that they're presented in tables.

Visual presentation is more important than it may sound. Rankings allow for objective comparisons, but when more than a few things are ranked, it would be hard to remember an oral presentation, much less treat it as "official" in some respect. The availability of print technology allows official rankings to be laid out in writing and then revised at the appropriate time.

(I'd speculate this is why, two thousand-plus years ago, Olympic winners were celebrated but the runners-up weren't acknowledged. Nowadays our conception of the Olympics is grounded in the assumption that each event has a winner, a second-place finisher, and so on.)

As you know, the historical trend I've touched on here isn't limited to sports. Hotel rankings on Tripadvisor, listicles like "The 10 Worst Nicolas Cage Movies of All Time, Ranked", and a zillion other ranking systems only became possible (and profitable) when print technology allowed them to be shared with large audiences. The internet of course makes the sharing easier.

This brings me to higher education.

Precursors to the U.S. News rankings

(Feel free to skip this section if you're not keen on reading about what prompted U.S. News to develop its ranking system.)

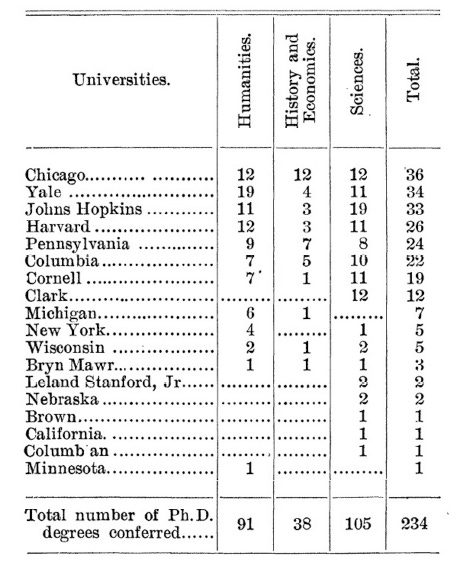

For nearly a century prior to U.S. News's first rankings in 1983, scholars, national organizations, and even federal agencies dabbled in ranking individual programs as well as colleges. For instance, here's James Cattell's 1898 list of American universities arranged by number of doctorates conferred:

(This time it's not a Chicago White Stockings player at the top of the rankings, but the University of Chicago, with 36 PhDs.)

There's nothing inherently controversial about Cattell's list, because he ranked colleges on the basis of a single, objectively defined variable. This is analogous to ranking the finishers in a race. Serious controversies only bubbled up when someone attempted to assess academic institutions more broadly.

During the 20th century, a number of changes made it more appealing to create a holistic ranking system for colleges. These include the post-WWII view that the quality of higher education is vital to our national interests, as well as a dramatic spike in enrollments beginning in the 1960s. Individual colleges began to be viewed as performative, or varying in the quality of their performance, and this view was accompanied by a growing pool of applicants eager to benefit from better-performing schools. In short, the particular college one attended, rather than the mere fact of attending college, came to be viewed as instrumental to future success. Hence the need for ranking systems that would offer guidance.

The U.S. News ranking system

In 1983, U.S. News created a more complex, subjective, and ultimately controversial ranking system than, say, a mere list of how many doctorates each college confers.

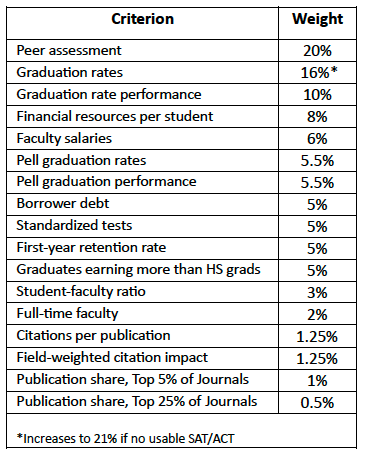

I won't be discussing every detail of how U.S. News generates rankings, but as a reference point I do want to share the 17 criteria currently used for ranking national universities, along with the weight given to each one in determining an institution's rank. These are shown in the table below. (If you're curious, each criterion is defined here. I'll be discussing peer assessment and the Pell criteria below.)

Why the U.S. News rankings are controversial

Before I get into specifics, here are two broad reasons the U.S. News rankings could never please everyone:

1. Colleges are ranked on a subjective dimension.

U.S. News ranks the "best" colleges. Period. There's no explanation of what "best" means. Indeed, none could be given, because the 17 criteria listed in the table above encompass very different sorts of things, while failing to include some things that most academics consider essential to the mission of higher education. Once the numbers are crunched, each school receives a single number representing its ranking on a dimension that can only be called "bestness". (I'm not being snarky. U.S. News offers no rationale for any other term.)

The "bestness" of anything, whether it's a college or a Taylor Swift album, is subjective. Listing things from best to worst rankles people (no pun intended), including those who disagree with the ordering, as well as those who think that colleges and Taylor Swift albums shouldn't be ranked in the first place.

2. The rankings are based on numerous, subjectively chosen variables.

If you want to rank colleges in terms of how many PhDs they've conferred, it's clear what needs to be measured: You count PhDs. It's not clear what should be measured when ranking colleges in academic quality, or whatever you associate with "bestness".

U.S. News has modified its rankings over the years, claiming responsiveness to surveys and other input from stakeholders. I don't doubt that, but the final decision about what to include or leave out is ultimately a subjective call. I say that on general principle and because U.S. News says very little by way of justifying its methodology. Almost nobody is happy with the 17 criteria that are currently used.
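To make the point about subjective weighting concrete, here's a minimal sketch. Everything in it is hypothetical: the three colleges, their scores, the three criteria, and the weights are invented for illustration and are not U.S. News data or the U.S. News formula. It simply shows that the same scores produce different "best" colleges depending on which weights someone decides to use.

```python
# Hypothetical scores (0-100) for three made-up colleges on three made-up criteria.
scores = {
    "College A": {"graduation_rate": 95, "peer_assessment": 70, "social_mobility": 60},
    "College B": {"graduation_rate": 80, "peer_assessment": 90, "social_mobility": 65},
    "College C": {"graduation_rate": 75, "peer_assessment": 60, "social_mobility": 95},
}


def rank(scores, weights):
    """Order colleges from best to worst by a weighted sum of their criterion scores."""
    def composite(name):
        return sum(weights[c] * scores[name][c] for c in weights)
    return sorted(scores, key=composite, reverse=True)


# Three equally defensible (and equally arbitrary) sets of weights.
weights_1 = {"graduation_rate": 0.6, "peer_assessment": 0.2, "social_mobility": 0.2}
weights_2 = {"graduation_rate": 0.2, "peer_assessment": 0.6, "social_mobility": 0.2}
weights_3 = {"graduation_rate": 0.2, "peer_assessment": 0.2, "social_mobility": 0.6}

print(rank(scores, weights_1))  # ['College A', 'College B', 'College C']
print(rank(scores, weights_2))  # ['College B', 'College A', 'College C']
print(rank(scores, weights_3))  # ['College C', 'College B', 'College A']
```

Each set of weights crowns a different college, and nothing in the data says which set is the right one.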

Why the U.S. News rankings are a scam

Now for some evidence for that nasty word I've been using.

U.S. News acknowledges that their rankings are "controversial". I prefer to call them a scam, in part because U.S. News, which ranks many things, including hospitals, best places to live, and water leak detectors, rakes in millions of dollars each year through subscriptions, advertising, and licensing fees that colleges pay to use the U.S. News logo in their marketing. I also use the term "scam" because U.S. News is profiting from methodology that's flawed. Deeply and hopelessly flawed. Here I'll describe just a few of the many problems; if you're interested in a deeper dive, try Colin Diver's 2022 book Breaking Ranks.

1. The reliability problem.

Whenever U.S. News adjusts its ranking methodology, the rankings immediately change. For instance, when U.S. News adjusted its methodology last year, 25% of national universities moved at least 30 places in the rankings compared to the previous year. This lack of consistency is an enormous red flag. Schools typically can't change much in academic quality, or whatever you think of as "bestness", from one year to the next.
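If you wanted to check a claim like this yourself, the arithmetic is simple. Here's a rough sketch, assuming you had each school's rank for two consecutive years; the school names and ranks below are made up, and `share_moved` is just a name I've chosen.

```python
def share_moved(ranks_before, ranks_after, min_places):
    """Fraction of schools ranked in both years whose rank changed by at least min_places."""
    common = ranks_before.keys() & ranks_after.keys()
    if not common:
        raise ValueError("no schools appear in both years")
    moved = sum(
        1 for school in common
        if abs(ranks_after[school] - ranks_before[school]) >= min_places
    )
    return moved / len(common)


# Made-up ranks for four hypothetical schools in two consecutive years.
before = {"School W": 10, "School X": 50, "School Y": 120, "School Z": 200}
after = {"School W": 12, "School X": 85, "School Y": 118, "School Z": 195}

print(share_moved(before, after, 30))  # 0.25 (only School X moved 30+ places)
```

In a stable measurement of something that changes slowly, you'd expect that fraction to be close to zero from one year to the next.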

2. Some validity problems.

(a) The social mobility issue.

Part of the reason for the shakeup in rankings last year is that criteria that had formerly determined 18% of a school's ranking (undergraduate class sizes, alumni giving, etc.) were discarded, while measures of social mobility increased in prominence.

For instance, as you can see in the table presented earlier, Pell graduation rates and Pell graduation performance together account for 11% of a school's ranking. (Pell Grants are federal subsidies to students who demonstrate exceptional financial need.)

I want to digress for a moment to explain what "Pell graduation performance" means, because it illustrates one of the many ways that U.S. News is suspiciously opaque and arbitrary with their statistics. Once I've explained what this means, I'll return to the problem with the way U.S. News uses Pell data.

"Pell graduation performance" is calculated by dividing six-year graduation rates for Pell recipients by comparable rates for all other students. This makes sense – the higher the number, the better Pell recipients are doing compared to peers – but then, with no rationale, U.S. news folds in arbitrary adjustments for the number of Pell recipients at a school. As a result, a school that doesn't do well at graduating Pell recipients might get a fairly good "Pell graduation performance" score simply because they enroll a lot of these students.

I think it's great that U.S. News attempts to evaluate social mobility. At least some people, including me, would say that the "bestness" of a school is partly a reflection of how well it prepares poor students for working their way out of poverty. However, U.S. News's Pell Grant criteria aren't likely to tell us much about actual social mobility:

- recipients of Pell Grants aren't the only economically disadvantaged students who attend college (and Pell isn't the only source of support)
- simply graduating more Pell recipients doesn't show how well they've been prepared for the workplace, or whether their economic situation actually improves in future years
- devoting 11% of a school's ranking to Pell criteria means that other criteria must be reduced in importance or discarded

A striking example of that last point is that as of this year, U.S. News no longer considers first-generation graduation rates. And yet, if you come from a poor family, and you're the first member of that family to attend college, actually graduating from college provides you a significant opportunity for lifting yourself and your family out of poverty. (U.S. News claims that it still supports this criterion "in principle", which is about as useful as saying "I'm not going to loan you that $100 I promised, but I support doing so in principle.")

(b) The peer assessment issue.

The largest single contributor to a college's ranking – 20% – is peer assessment. Here's how U.S. News explains it:

Each year, top academics – presidents, provosts and deans of admissions – rate the academic quality of peer institutions with which they are familiar on a 5-point scale: outstanding (5), strong (4), good (3), adequate (2) or marginal (1). U.S. News takes a two-year weighted average of the ratings. Those who don't know enough about a school to evaluate it fairly are asked to mark "don't know."
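For readers who like to see the mechanics, here's a rough sketch of what that description implies. U.S. News doesn't say how the two years are weighted, so the equal weighting below is purely my assumption, as are the function names and the sample responses. "Don't know" answers are represented as `None` and left out of the average.

```python
from statistics import mean


def yearly_score(responses):
    """Mean rating on the 1-5 scale, ignoring "don't know" responses (None)."""
    rated = [r for r in responses if r is not None]
    if not rated:
        raise ValueError("no usable ratings for this school")
    return mean(rated)


def two_year_score(current, previous, current_weight=0.5):
    """Weighted average of two years' mean ratings.

    current_weight is an assumption; the actual weighting isn't published
    in the description quoted above.
    """
    return (current_weight * yearly_score(current)
            + (1 - current_weight) * yearly_score(previous))


# Hypothetical survey responses for one school in two consecutive years.
current = [5, 4, 4, None, 3]   # mean of the four ratings is 4
previous = [4, 3, None, 2]     # mean of the three ratings is 3

print(two_year_score(current, previous))  # 3.5
```

Notice how little goes in: a handful of whole numbers between 1 and 5 per respondent, averaged into a figure that ends up carrying a fifth of the school's ranking.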

I'm willing to assume that administrative leaders are good judges of academic quality, but are they going to judge fairly, or – as has occurred in the past – will they downgrade peer institutions to make their own college look better?

This year, only 30.7% of administrators responded to U.S. News's peer assessment survey. (The figures for national and liberal arts universities were 41% and 47%, respectively.) Sampling bias is likely, but U.S. News offers no details that might allow us to judge. What we need is either a much higher response rate, or assurance that those who did respond were a representative and apparently unbiased sample.

The fundamental problem with the U.S. News peer assessment is that a 5-point scale isn't sensitive enough to capture the academic quality of an entire institution. At minimum we need a scale with more points, along with more questions so that respondents could evaluate academic quality in specific content areas (e.g., STEM), or along specific dimensions (e.g., quality of instruction and student experience). This is a microcosm of the problem with the entire U.S. News enterprise, as it's geared toward reducing large, complex, multifaceted institutions to single numbers.

(c) The teaching and transformation issue.

I think it would be false balance to say that the U.S. News rankings are "controversial". No one in higher education, including those who see value in the rankings, truly believes they capture school-by-school differences in "bestness", whatever one interprets that to mean.

For instance, when we think about what most academics consider essential to the mission of a college, here are some of the fundamental questions that U.S. News doesn't directly address: How well are students taught? How much do students learn? How much more critically reflective do students become? You might disagree about how much responsibility colleges have to produce more tolerant, politically engaged, and ethically responsible students, but high-quality teaching and learning are clearly central to a college's raison d'être.

(And if you disagree with that noble sentiment, well, notice that U.S. News uses only one criterion for future success in any sense: a 5% weight is given to the median earnings of graduates compared to peers who only have a high school degree. The fine print tells us that the figures aren't even calculated for all graduates but simply for federal loan recipients. This is an exceedingly limited indicator of how well the college has prepared its students for career success.)

(d) The ethical problem.

A ranking system pits institutions against each other, and so, as you would expect, much effort gets put into gaming the system. I'll spare you the details, other than to say that even colleges that probably don't need to be a few rungs higher on the ladder try to claw their way up anyway. The most famous recent example is Columbia's 2022 admission of having sent U.S. News inaccurate data on class size and faculty credentials that boosted its earlier ranking.

Are there better alternatives?

U.S. News knows that its rankings aren't widely loved. They hear the complaints, and they roll with minor dissents (e.g., the law schools that decline to cooperate) that haven't quite caught on but may be a harbinger of a full-scale revolt.

One benefit of public frustration about the rankings is that U.S. News has been forced to be careful about how they're framed. For instance, what the home page promises is not just rankings, but "expert advice, rankings and data" to help you "find the best college for you."

I appreciate that U.S. News emphasizes fit now, but they know it's the rankings that keep them in business, because you can find expert advice and data everywhere, for free, but nothing else has the cachet of their rankings.

Should we trust other ranking systems? I think not. These systems, like Forbes, Niche, Wall Street Journal, and The Princeton Review, suffer from much the same limitations as the U.S. News approach. The details differ, but in the end they each offer a somewhat arbitrary, subjectively created recipe for boiling a complex living institution down to a single number. The best I can say about these ranking systems is that each has its own particular strengths that set it apart from the rest. If you're looking for a lot of different kinds of rankings, try U.S. News. If you care most about student perspectives, try The Princeton Review. If you want program-specific rankings, or best value rankings, U.S. News and others offer these too, though they reflect the same kinds of limitations as the overall rankings.

Other approaches to judging schools that I haven't gotten into here include rating them by stars (e.g., Money's 2 to 5 star system) or letter grades (A to F). The advantage of these systems is that by dividing schools into a small number of rating levels, we bypass the silly but hard-to-avoid feeling that the school ranked #25 this year is "better" than the school ranked #26. The problem with all of these systems is that (a) the ratings are still a kind of ranking, and (b) schools are still being reduced to a single score.

The best alternative (but I'm not ranking them)

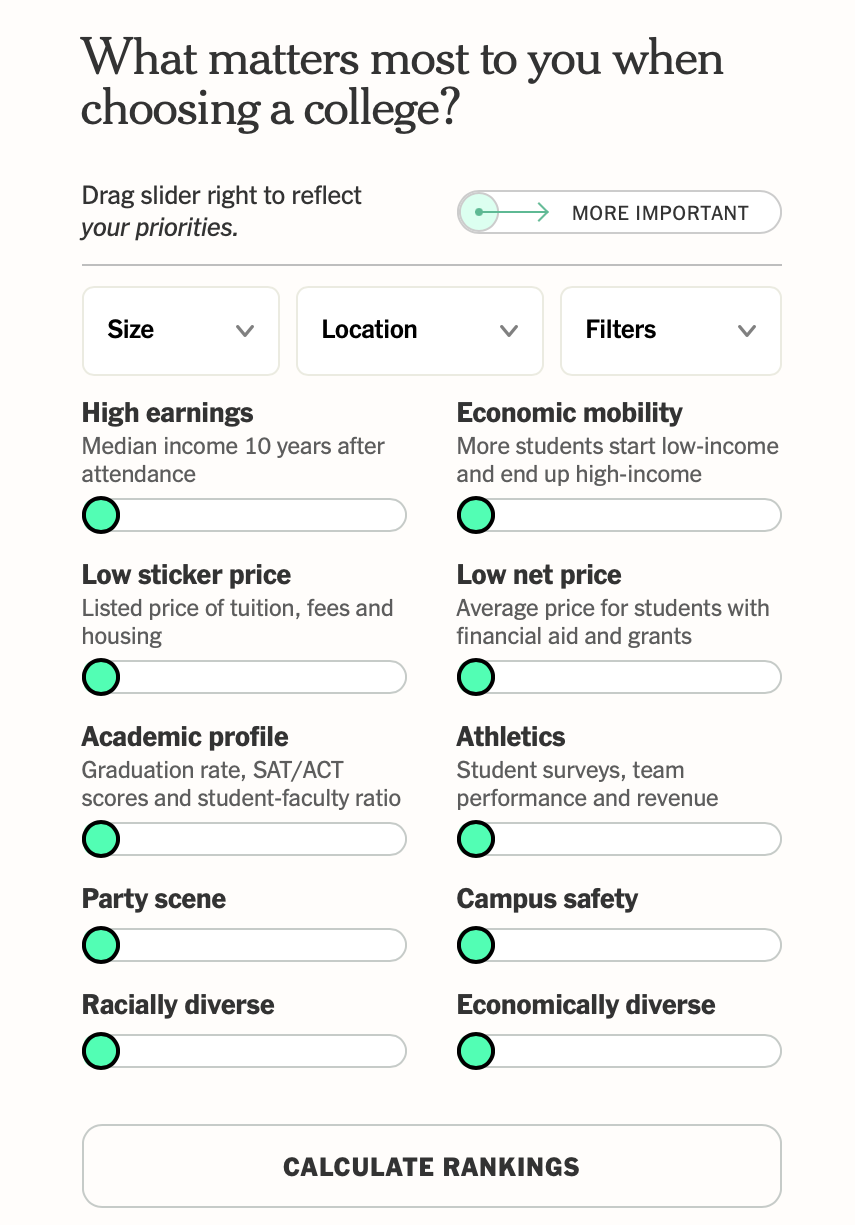

In my opinion, the best ranking system appeared in the New York Times last year. The Times offers a "build your own college rankings" interface, which allows the student to choose their priorities across as many as 13 different criteria. It's easy to use, and by adjusting the criteria, you can create literally thousands of different ranking systems. The filters alone (the button near the upper right) can limit the ranking to public or private, STEM or humanities focus, religious affiliation or secular, HBCU or not, and so on.
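"Literally thousands" isn't an exaggeration. As a back-of-the-envelope check, assume each of the 13 criteria can simply be switched on or off, and that at least one must be on. That on/off model is my simplification; the real interface may offer finer settings, which would only make the count larger, and this ignores the filters entirely.

```python
from math import comb

N_CRITERIA = 13  # number of criteria mentioned above

# Count every way of choosing k criteria out of 13, for k = 1 through 13.
combinations = sum(comb(N_CRITERIA, k) for k in range(1, N_CRITERIA + 1))

print(combinations)  # 8191, the same as 2**13 - 1
```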

The Times' approach doesn't fix all of the problems I've described here – what they call "academic profile" is still an indirect, weak indicator of the academic benefits of college, and "high earnings" (median income 10 years after attendance) is misleading, given that this value depends in part on the affluence of one's family upon entering college. But I think it would be extremely informative for students to play with the 13 options and see how the rankings change. That, in the end, is the most one should hope for from a ranking system: More information.

One more improvement

I had an interesting conversation earlier this week with Daniel Parris, a data scientist and journalist who writes about the intersection of statistics and popular culture on his Substack, Stat Significant.

I reached out to Mr. Parris because some of his posts illustrate what I would call best practices in creating rankings for complex, highly subjective questions like which colleges are best. For instance, in a recent post, he presented a ranking of the 12 greatest actors in movie history. The approach was great, because (a) a small number of criteria were used (average online ratings, median box office earnings, and number of Oscar nominations), (b) the methodology was completely transparent (the process of choosing criteria and calculating final rankings was fully described), and (c) Mr. Parris framed what he was doing as a means of fostering dialogue rather than revealing the Truth. Here's what he had to say about this post:

"For something like best actor, which is very much like an extension of someone's personal preference, it is kind of silly to rank things...and so I wanted to (a) make the journey more meaningful and engaging, and provide transparency, and then (b) focus on the discourse that is created... A lot of people think that because I'm using statistics, I think the results of that post are etched in stone and is the ground truth, but really it's just another entry into an ongoing dialogue about who's the greatest actor."

Now, imagine that instead of Mr. Parris, a representative from U.S. News had said the same thing about their rankings. Students and other higher education stakeholders would be so much better off, because U.S. News would be acknowledging that their rankings are merely an extension of the organization's "personal" preferences, because they'd have been fully transparent about how the rankings were created, and, most importantly, because they'd be emphasizing that their ultimate goal was simply to promote discussion about the quality of colleges – including the discussions that high school students have with family and friends about where to apply. U.S. News rankings are treated like a finished product, but if they have any value, it's in contributing to a process.

Conclusion

Frank Bruni's 2015 bestseller on the college admissions "mania" is entitled Where You Go is Not Who You'll Be. This is great advice, and Mr. Bruni backs it up in many ways, showing, for instance, that the CEOs of the top 10 Fortune 500 companies (as of 2014) mostly attended good state schools rather than the Ivies.

I think the title of the book should've been Where You Go is Not as Important as What You Do Once You Arrive. Most colleges offer enough resources for most students to succeed at the next level, whether that involves a graduate degree, or employment, or something else (assuming that the students are motivated and manage to connect with reasonably supportive faculty and others).

My conclusion is that ranking systems like U.S. News, which offer a one-size-fits-all approach, aren't useful at all, except as a guide to what others are stressing about, and, again, as a spur to deeper conversation about institutional quality and the likely fit between particular students and particular schools.

If my daughter were a senior in high school now, I'd tell her the usual things – think about what you might want from college, research your options, talk to current students and alums, and use the New York Times interface. If she did these things, I literally wouldn't care what the U.S. News rankings were for her preferred schools.

(OK, to be honest, I'd care a little. But I'd try really, really hard not to.)

Thanks for reading!
