College Drinking and the Gatsby Effect

10,000 hours of practice will make you an expert. Houseplants detoxify the air. Ozempic keeps people thin. And, according to the new study I'll be discussing, a smartphone app reduces problem drinking among college students.

What do these findings have in common? They all illustrate what I call the Gatsby Effect.

The Gatsby Effect

In The Great Gatsby, the title character appears, seemingly out of nowhere, and dazzles the upper crust of Long Island with extravagant parties at his outsized mansion. As it turns out, Gatsby was a splendid fraud, a "nobody" who'd amassed his fortune via bootlegging and charm.

Jim Gatz, the country boy from North Dakota, is an interesting character, but it is his transformation into Jay Gatsby that draws the novel together. Without Gatsby, we'd be left with a sort of historical document from the Prohibition Era.

In published studies, or in media coverage of those studies, you sometimes encounter a finding that's tremendously appealing. People are impressed; word gets around. But if you look closely, you discover that the finding is misrepresented. Some sort of wrongdoing – weak methods, flawed statistics, biased interpretation – made the deception possible. If you set that finding aside, the original study is still informative. It just doesn't tell a great story.

All of these features, taken together, constitute the Gatsby Effect.

For instance, in his 2008 bestseller Outliers, Malcolm Gladwell popularized the finding that 10,000 hours of practice results in expertise. This quickly became a meme – and prompted scholarly pushback. Many people, including me, have pointed out that Gladwell misrepresented the actual data. (He has recently backpedaled and, to an extent, now misrepresents his own original claims.)

Briefly, the data tell us that many hours of sustained, focused practice, combined with good instruction and other support, lead some people to become experts. How many hours? Thousands, depending on how you define expertise, but 10,000 is not, as Gladwell claimed, "the magic number of greatness."

What I'm saying here is what Anders Ericsson, the author of the studies that Gladwell drew upon, had been saying all along. The actual data Ericsson and others reported are informative – but not as catchy as Gladwell's glitzy and deceptive 10,000 hour meme.

I've also written about the claim that 10,000 steps make you fit, the finding that houseplants detoxify the air, and the notion that Ozempic promotes weight loss.

Each of these findings illustrates a Gatsby Effect. Much of the Ozempic data, for instance, comes from studies on Ozempic combined with lifestyle changes, so claiming that the drug alone caused weight loss is deceptive. Plus the weight doesn't stay off when people stop taking the drug, and it doesn't work for everyone in the first place. The data are interesting – Ozempic does help some folks lose weight while they take it, especially in conjunction with lifestyle changes – but this is not a magic weight loss pill.

The new study

The study I'll be discussing was published in The BMJ in late August by Nicholas Bertholet at the University of Lausanne and colleagues.

The participants were 1,770 students at four Swiss universities. These students had been identified as engaging in unhealthy alcohol use. (The screener used to identify these students is described here.)

Each student was randomly assigned to one of two groups:

–The intervention group was given access to a smartphone app designed to reduce drinking. (See Appendix below for details.)

–The control group did not receive the app and was unaware of its existence.

For each participant, self-reported alcohol consumption was measured at baseline, and then again at 3, 6, and 12 months.

The main analysis focused on number of drinks per week during the past month. Secondary analyses also considered maximum number of drinks on any one day during the month, as well as the number of "heavy" drinking days (5 or more drinks for men; 4 or more drinks for women).
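To make the three outcome measures concrete, here is a minimal sketch of how each could be computed for one student. It assumes a hypothetical list of daily drink counts; the study itself relied on students' self-reports about the past month, not daily diaries, and the function name and made-up diary below are mine, not the researchers'.

```python
# Thresholds taken from the study's definition of a "heavy" drinking day.
HEAVY_DAY_THRESHOLD = {"male": 5, "female": 4}


def summarize_month(daily_drinks, sex):
    """Summarize one month of drinking.

    daily_drinks: hypothetical list with one drink count per day.
    sex: "male" or "female" (selects the heavy-day threshold).
    """
    threshold = HEAVY_DAY_THRESHOLD[sex]
    weeks = len(daily_drinks) / 7
    return {
        "drinks_per_week": sum(daily_drinks) / weeks,
        "max_drinks_one_day": max(daily_drinks),
        "heavy_days": sum(1 for d in daily_drinks if d >= threshold),
    }


# Made-up 28-day diary for illustration only (the same week repeated 4 times).
month = [0, 0, 2, 0, 1, 5, 4] * 4
print(summarize_month(month, "male"))
print(summarize_month(month, "female"))
```

Note that the same diary yields a different number of heavy days depending on which threshold applies.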

At baseline, both groups were comparable in drinking behavior during the previous month, averaging between 8 and 9 drinks per week, 7.4 drinks for their maximum drinking day, and just over 3.5 heavy drinking days during the month.

The main finding was that on average, the intervention group drank significantly less per week than the control group did at the 3, 6, and 12 month assessments. The intervention group also reported significantly lower maximum numbers of daily drinks at each followup, and significantly fewer heavy drinking days.

In sum, a simple smartphone app seems to have reduced drinking among college students who already drink too much.

Given the prevalence of excessive alcohol consumption among students, along with the many harmful consequences for the drinkers and those around them, the main finding of this study is deeply impressive.

In short, Jay Gatsby has just walked into the room. Let's have a look at where he came from.

Some glitz

Mr. Gatsby tended to make a positive first impression, and so does this study. Here are two apparent strengths:

1. Compliance.

75% of eligible students agreed to participate. 84% of the intervention group actually downloaded the app (and presumably used it). 94% of that group (and, coincidentally, 94% of the control group) completed surveys on alcohol use at the 3, 6, and 12-month marks. Not all intervention studies do so well at getting participants on board and maintaining their participation. Like Gatsby, the researchers must have been persuasive.

2. Measurement.

Although I'll defend it in a moment, the approach to measuring alcohol consumption was hopelessly imprecise. This isn't the researchers' fault but rather inherent to the variable measured.

Here we encounter the usual suspects. Although "drink" was defined for students, there's variability in the ethanol content of any category of alcoholic beverage (e.g., wine), people don't always consume the entire contents of each glass or container, they don't necessarily know glass or container sizes, etc. Compounding the problem is that the more people drink, the worse their memory will be for anything that occurred at the time, including the amount they drank. (Asking people how much they drank last month is like asking a child how many shells she gathered at the beach last month. If it was two or three, she might remember the exact number. The more she gathered, the less likely she'd remember exactly.)

In spite of these limitations, the differences between the intervention and control groups were remarkably consistent. In statistical parlance, there was no interaction between group and time. In other words, at 3, 6, and 12 months, the intervention group was drinking less than the control group, and the extent of difference was nearly the same each time. This hints that the approach to measurement was reliable, in spite of the obvious limitations mentioned above.

In fact, this consistency leads us straight to the flawed, deeply misrepresented core findings, where Jay Gatsby turns out to be the far less spectacular Jim Gatz.

Gatsby outed

I'll focus on the main finding – number of drinks per week during the past month – but everything I say here applies to the other measures.

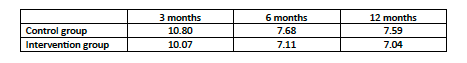

The table below shows the average number of drinks per week reported by each group for the prior month at each followup assessment (3, 6, and 12 months):

Here are two key details in the table:

1. The differences between the two groups are tiny. The largest differences, at 6 and 12 months, correspond to about half a drink per week.

2. The time difference is much larger than the group differences. Specifically, from 3 months to either of the two later time periods, each group reports a decline of about 2 to 3 drinks per week. That's four to six times greater than the difference between the two groups at any one time.

In short, the main finding here is changes over time in drinking behavior, not differences between the intervention and control groups.
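The "four to six times" figure is simple arithmetic on the rounded values quoted above (about half a drink between groups, about 2 to 3 drinks over time). A quick check, using only those approximations and not the exact table entries:

```python
# Rounded values quoted in the text, not the exact table entries.
group_gap = 0.5          # largest between-group difference, drinks per week
time_drop = (2.0, 3.0)   # decline from 3 months to later assessments

# How many times larger is the change over time than the group gap?
ratios = tuple(drop / group_gap for drop in time_drop)
print(ratios)  # (4.0, 6.0)
```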

Here's the table again, with the baseline data included:

Now you can see that drinking spiked from baseline to 3 months, then declined to slightly lower than baseline levels and stayed there.

The group differences are almost non-existent. The intervention doesn't seem to work. Rather, the data reflect time-related change, and there's a clear explanation for why:

Baseline assessments were made while classes were still being held remotely, owing to the pandemic. The 3-month assessment took place just after Covid lockdown restrictions had been eased. As the researchers put it when explaining the 3-month spike:

"Students may have been eager to take advantage of more easily available alcohol and softer social distancing rules allowing more opportunities to drink than previously. The three month follow-up also coincided with summer, when students are typically on holiday, which may explain the general increases in drinking observed at three months in both groups."

In contrast, the 6- and 12-month assessments took place during the regular semester, after in-person classes had resumed.

Clearly it's inappropriate to present group differences as the main finding, when time had a much greater influence on drinking behavior, and there's a plausible explanation for that influence.

But wait, didn't the intervention have a tiny effect? Weren't the group differences statistically significant?

Yes they were, but it turns out that the researchers cheated. In their analyses, they combined data across the three followup periods, then compared the two groups without including baseline data.

The problem here is technical; the gist is that the researchers inappropriately focused on a subset of their data after inappropriately combining it. I suspect that proper analyses would not have yielded group differences. "Proper" would mean individual-level analyses of drinking behavior over time, starting from baseline, to check whether reductions in drinking tended to occur more extensively at each assessment point among the intervention group.
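To give a rough sense of what an individual-level, baseline-anchored comparison looks like, here is a descriptive sketch. It is not the researchers' analysis and not a formal statistical test (a longitudinal model, such as a mixed-effects model, would typically be used for that). The records, group labels, and function names are all hypothetical, and the numbers are invented purely to make the code run.

```python
from statistics import mean

# Hypothetical toy records, NOT data from the study.
# "drinks" maps assessment month (0 = baseline) to drinks per week.
records = [
    {"id": 1, "group": "app", "drinks": {0: 9.0, 3: 11.0, 6: 8.0, 12: 8.0}},
    {"id": 2, "group": "app", "drinks": {0: 8.0, 3: 10.0, 6: 7.0, 12: 7.0}},
    {"id": 3, "group": "control", "drinks": {0: 9.0, 3: 11.0, 6: 8.0, 12: 8.0}},
    {"id": 4, "group": "control", "drinks": {0: 8.0, 3: 11.0, 6: 8.0, 12: 7.0}},
]


def change_from_baseline(record):
    """Each followup value minus that same person's baseline value."""
    base = record["drinks"][0]
    return {m: v - base for m, v in record["drinks"].items() if m != 0}


def mean_change_by_group(records):
    """Average the individual changes within each (group, month) cell."""
    cells = {}
    for r in records:
        for month, delta in change_from_baseline(r).items():
            cells.setdefault((r["group"], month), []).append(delta)
    return {key: mean(values) for key, values in cells.items()}


def group_gap_in_change(records, treated="app", control="control"):
    """Treated-minus-control difference in mean change at each followup."""
    means = mean_change_by_group(records)
    months = sorted({month for (_, month) in means})
    return {m: means[(treated, m)] - means[(control, m)] for m in months}


print(group_gap_in_change(records))
```

The design choice that matters is that every comparison starts from each person's own baseline, so a group difference only shows up if the intervention group's drinking changed more than the control group's did.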

Pre-registration failure

This was a pre-registered study, meaning that the researchers published their protocol online, prior to data collection. Pre-registration is increasingly practiced (and in some cases required) in part because it prevents "fishing" and other statistical malfeasance. What went wrong here? I looked at the pre-registered protocol, and found that (a) the researchers didn't analyze their data the way they promised they would, and (b) the description in the pre-registered protocol was pretty vague anyway. (Deception and vagueness...quite Gatsbyesque.)

I'm a fan of pre-registration, as are many, but this study illustrates why it's not foolproof.

Summary

The study I've reviewed here offers a perfect example of a Gatsby Effect:

1. The main finding of the study sounds deeply impressive. A smartphone app is said to reduce drinking among college students who are already heavy drinkers.

2. The main finding is misrepresented. In fact, there's no evidence that the app is effective. There's only evidence that it's not. The culprit here is inappropriate data analysis.

3. The study is interesting without the main finding. The time-related patterns of drinking are remarkably consistent across groups. Although unsurprising, this is useful information.

If I were a mental health professional or university administrator, my takeaway would be that the smartphone app doesn't help (or at least we have no evidence that it helps). On the other hand, the study shows that we can predict fluctuations in student drinking behavior, at least among heavier drinkers, according to the time of year.

There's a ton of research and practical advice for addressing the problem of student overindulgence. Smartphone apps seem like a worthwhile strategy, but they haven't yet been found to be consistently effective. I remain optimistic. Just as Gatsby, when he was still Jim Gatz, did "extraordinarily well" during the war, one can hope that future iterations of these apps do at least moderately well at reducing excessive drinking among young people.

Thanks for reading!

Appendix: The smartphone app

The researchers did not describe their smartphone app in much detail, perhaps for proprietary reasons. All we're told is that it's evidence-based, and that it consists of six modules. From an educational technology perspective, these modules, taken together, seem quite sophisticated. Here they are: