Pandemic Learning Loss: Part 1

The pandemic has kept us focused on the day-to-day. Staying safe. Grieving the victims. Coping with isolation. Enduring Zoom.

At the same time, experts have been talking since the beginning about long-term impacts: the "new normal" we'll be experiencing even after the pandemic has passed.

In this newsletter, I'll be discussing pandemic learning loss – what it is, why it's a problem, and how worried we should be. (Next week I'll talk about solutions.) There's bad news to share as well as good, and stats play a key role in both.

A definition

Although "learning loss" is a common phrase, it doesn't refer to students "losing" (i.e., forgetting) what they've learned. Rather, it means they haven't learned as much as expected.

Expectations for student learning come from historical data. For example, when 3rd graders take a test of reading achievement, their performance will be compared to that of 3rd graders who took the same test in previous years. "Learning loss" might be defined as significantly lower mean or median reading scores than before, meaning that the new cohort of 3rd graders has made less progress in reading than previous cohorts did.
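To make the definition concrete, here is a minimal sketch of the comparison in Python. The scores are invented purely for illustration and are not real test data, and the function name `learning_loss` is my own. The sketch only computes the raw drop in mean and median; deciding whether a drop is "significant" would require a proper statistical test, which is not shown here.

```python
from statistics import mean, median


def learning_loss(previous_scores, current_scores):
    """Return the drop in mean and median score from a previous cohort to the current one."""
    return {
        "mean_drop": mean(previous_scores) - mean(current_scores),
        "median_drop": median(previous_scores) - median(current_scores),
    }


# Hypothetical 3rd-grade reading scores, made up for illustration only.
cohort_2019 = [200, 205, 210, 215, 220]
cohort_2021 = [195, 200, 205, 210, 215]

loss = learning_loss(cohort_2019, cohort_2021)
print(loss)
```

A positive drop means the newer cohort scored lower than the earlier one, which is what "learning loss" refers to.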

The bad news

During academic year 2020-2021, American students at every grade level made less progress than their peers did in previous years. ("Previous years" often means 2018-2019 or earlier, because many end-of-year tests were canceled for the 2019-2020 year.)

Two additional findings make this bad news especially bad:

1. Consistency.

Pandemic learning loss has been seen at the national level on tests of reading and math (e.g., MAP Growth and i-Ready assessments), and at the state level on tests of how well students have learned the required curriculum for each grade and class.

The extent of loss varies from test to test, and from state to state, but it has been seen in all states and on virtually all tests. In short, pandemic learning loss is a nationwide problem, not one limited to any particular state or test.

2. Group differences.

Pandemic learning loss is greater among economically disadvantaged students, African American students, Hispanic students, and low-performing students. Here too, the findings are consistent across studies, even when statistical methods differ. For example, some studies gather data from individual students and then compare student groups, while other studies compare entire schools labeled as African American, Hispanic, or White, depending on which racial/ethnic group the school primarily serves. Either way, the results are disturbingly consistent (see here and here, for example).

Group differences have been attributed to numerous causes. For example, studies show that economically advantaged students and White students have more reliable internet access, more support for online learning, more opportunities for face-to-face instruction, and more support for making use of face-to-face options. In short, these groups are advantaged with respect to remote learning as well as conventional face-to-face instruction.

One more thing that makes the bad news bad is the potential stigma associated with references to "pandemic learning loss." Some educators avoid this phrase altogether, because they don't want to label an entire generation of students as damaged. They consider labels like this bad for students, as well as demoralizing for teachers and others who have worked so hard during the pandemic to support their students. And yet, student learning has clearly diminished these past two years. Even if it's perilous to name it, the problem exists, and experts agree that it must continue to be addressed.

Qualifications

I'm not calling this section "good news." I call it "qualifications" because it presents a few reasons why the bad news isn't as bad as it sounds. I don't want to claim that it's not bad news.

1. The pandemic isn't the problem.

One thing that's screamingly obvious from state-mandated assessment results is that student performance was already problematic, even before the pandemic hit.

For example, in the state of Texas, students are required to take the STAAR tests near the end of each academic year from grades 3 through 8. These tests now cover reading (all grades), math (all grades), science (5th and 8th grades), and social studies (8th grade). Each student's numerical score on each test is labeled as either "masters grade level", "meets grade level", "approaches grade level", or "did not meet grade level". A reasonable goal is for all students to meet grade level, since this label is for students who "have a high likelihood of success in the next grade or course". (In contrast, students who approach grade level are likely to succeed only with "targeted academic intervention.")

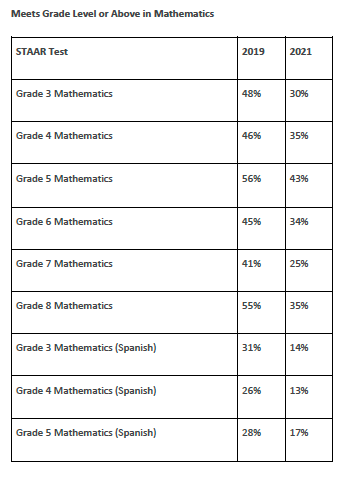

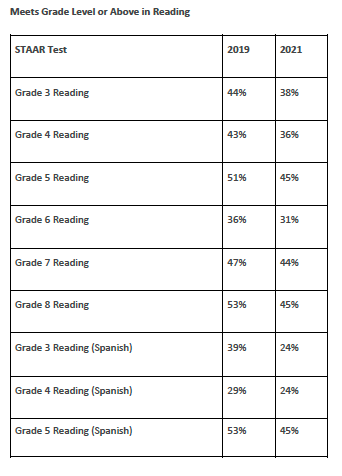

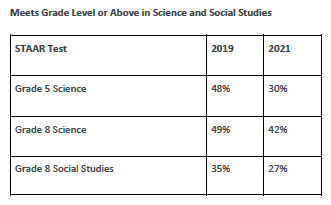

Now take a look at some stats on the percentages of students who met grade level for each grade and subject. The tables below present STAAR data for the 2019 and 2021 tests. (STAAR tests were canceled in 2020.)

Here are two themes evident in these tables:

(a) Learning loss has occurred. The percentages of students performing at grade level were lower in 2021 than in 2019 for every grade and subject. No exceptions.

(b) Performance in 2019 was not strong. Even on the test with the best outcome (grade 5 mathematics), only 56% of students met grade level expectations. For most of the tests, fewer than half of students met the expectations for their grade.

There's nothing particularly unusual about 2019 or about Texas. Pre-2019 data from Texas and other states are similar (although in some subjects, like reading, scores had been trending downward in recent years). Stats like these tell us that even before the pandemic, we should've been either adjusting the expectations we have for our students, or doing a better job of educating them.

In sum, the pandemic isn't the problem. Rather, it has exacerbated an existing problem.

2. Education didn't stop during the pandemic.

Students didn't stop learning when the pandemic hit; they just made less progress. In addition, some experts point out that students learned new things, such as technology use, meal preparation, and resilience. Again, I wouldn't claim that this offsets the bad news. It's just an acknowledgment that learning didn't shut down when school buildings did.

3. Pandemic learning loss may be overestimated.

Lower test scores may reflect more than just reduced learning. At least some of the decline may stem from the conditions under which students were tested. Depending on state and test, students have had to contend with new testing procedures, such as online formats or test-taking while masked. These changes are distracting and may have contributed to lower scores, so the scores may underestimate students' true progress.

Test performance may also be compromised when testing and instructional formats don't match. For example, consider the MAP Growth assessments, which are used in many states as indicators of progress in reading, math, and other subjects. Here's how Grace Kim, an Evaluation Analyst for the Dallas Independent School District (DISD), summarizes instruction and MAP testing in her district for 2020-2021:

"Beginning in late October, the district announced both in-person and virtual learning throughout the rest of the school year and parents could choose whether their children participated in in-person or virtual instruction. Also, parents could change the method of instruction every nine weeks throughout the year.

Schools encouraged parents who chose virtual instruction to bring their children to school when they administered MAP assessments. Because taking MAP at home was also an option, not all parents brought their children to school."

In other words, at the end of AY 2020-21, MAP test-takers included some students who attended classes in person but took the tests online, as well as some students who were taught online but took the tests in person.

Apart from testing conditions, we should also consider each test-taker's state of mind. The pandemic created more stress than usual for students. They knew people who got sick. They got sick themselves, or at least worried about it. They missed face-to-face interactions with their friends. Maybe a family member got laid off. Meanwhile, taking the usual tests at home for the first time, or at school while wearing a mask and distanced from classmates, served as a constant reminder of what had been causing them stress.

In sum, the true extent of pandemic learning loss may be overestimated, owing to unusual testing conditions, mismatches between testing and instructional formats, and elevated stress.

(To be fair, some experts have suggested the opposite – i.e., learning loss may actually be greater than what the test results tell us – because some students receive assistance on the tests they take at home, and because students who have been least present for instruction may not have participated in the tests. In my view, overestimation is more likely than underestimation, for the reasons I mentioned earlier.)

4. Learning loss can be reversed.

However bad the bad news might seem, we also have numerous evidence-based strategies for helping students catch up. I'll be talking about these strategies next week, including the science, politics, and, of course, the statistics behind them. Stay tuned!