Rapid Test Accuracy

First of all, no, marijuana will not protect you against COVID-19. There was a lot of "buzz" last week about a new study allegedly showing that cannabis prevents COVID-19 infection. However, that's not quite what the study shows (see Appendix A for details). Instead of rolling a joint, you still need the usual precautions: masking, distancing, testing, etc. Which brings me to my topic for this week: The accuracy of at-home rapid COVID-19 tests.

In recent weeks, Americans have been using these tests at a rate of several million per day, and the number will surely increase now that the Biden administration has begun distributing half a billion test kits. It's a blessing to have COVID-19 tests that are rapid, convenient, and free. But are they accurate enough to be helpful?

In this newsletter I'll be using a FAQ format to address some questions people have been asking about rapid testing (as well as some they haven't been asking but perhaps should). In particular, what do accuracy statistics mean? How accurate are current tests? Why has the FDA authorized tests that don't seem very accurate? When and how often should I test? What should I do with the results?

What does "accuracy" mean?

COVID-19 tests have binary outcomes: They tell you either that you're positive (infected) or that you're negative (not infected). The accuracy of a binary test actually consists of two things: sensitivity and specificity.

Sensitivity refers to the percentage of infected people who test positive. If everyone who truly has COVID-19 tests positive, then sensitivity is 100%. If 8% of the people who have COVID-19 test negative, then sensitivity is 92%, and we say that the false negative rate is 8%. (We refer to this kind of mistake as a "false negative" because the test result is falsely claiming that the person is uninfected.)

Specificity is the percentage of uninfected people who test negative. If everyone who truly doesn't have COVID-19 tests negative, then specificity is 100%. If 3% of these uninfected people test positive, then specificity is 97%. In this case, we say that the test has a 3% rate of false positives. (We refer to this kind of mistake as a "false positive" because the test result falsely claims that the person is infected.)

When you take a COVID-19 test, there are four possible outcomes, two related to sensitivity and two related to specificity:

Sensitivity:

1. True positive. (You're infected, and the test confirmed that.)

2. False negative. (You're infected, but the test missed that.)

Specificity:

1. True negative. (You're not infected, and the test confirmed that.)

2. False positive. (You're not infected, but the test claimed that you are.)
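The four outcomes can also be written as a tiny lookup. This is only an illustrative sketch; the function name `classify_result` is made up for this newsletter and does not come from any test kit or library.

```python
def classify_result(infected: bool, tested_positive: bool) -> str:
    """Name the outcome of one test, given the person's true status."""
    if infected:
        # Infected people are the ones who determine sensitivity.
        return "true positive" if tested_positive else "false negative"
    # Uninfected people are the ones who determine specificity.
    return "false positive" if tested_positive else "true negative"


for infected in (True, False):
    for tested_positive in (True, False):
        print(infected, tested_positive, classify_result(infected, tested_positive))
```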

Which type of accuracy is most important?

Obviously we want a test to be as sensitive and specific as possible, but is one of these dimensions more important than the other?

People have a tendency to focus on sensitivity, because false negatives promote the spread of the disease. However, the importance of each dimension depends very much on the person. Consider the following (hypothetical) people who felt mildly fatigued and had scratchy throats for the past two days, and who now decide to take a rapid COVID-19 test:

—Ms. A is a financial analyst who works remotely, only socializes online or in open spaces, masks in public, and practices distancing.

—Ms. B is a single mother of three school-age children whose work as a nurse practitioner requires face-to-face interactions and is hourly rather than salaried.

—Mr. C is a teacher whose job requires extensive face-to-face interactions with students. Mr. C's school provides good tech support for online instruction whenever a teacher or student is diagnosed with COVID-19.

—Mr. D is a computer programmer who works remotely, only socializes online, masks in public, and practices distancing. Mr. D's elderly father, who lives with him, never goes out, and he requires Mr. D's assistance with daily routines.

For Ms. A, neither sensitivity nor specificity matters much. A false positive wouldn't call for drastic life changes, while a false negative wouldn't cause her to put others at risk, because her behavior already minimizes the risk of transmission.

For Ms. B, both sensitivity and specificity matter a lot. A false positive might be highly disruptive to her financial well-being and her parenting. A false negative would expose her children and those she works with to the virus.

For Mr. C, sensitivity seems more important than specificity. A false positive would merely require him to work from home temporarily. However, a false negative would put his students and others at his school at risk of infection.

For Mr. D, specificity seems more important than sensitivity. A false negative wouldn't endanger others, because his behavior already minimizes risk of transmission. However, a false positive would call for drastic but unnecessary changes to his father's care. Mr. D would need to quarantine from his father and find outside assistance.

How accurate are at-home tests?

Among the 13 at-home tests that have received FDA Emergency Use Authorization so far, the specificity exceeds 97% in every case, while the sensitivity ranges from 84% to 93.4%. (Links to the data reported by each manufacturer to the FDA are given here.)

Those stats might seem impressive, but we should remember that real-world accuracy will certainly be lower (as studies are beginning to show, particularly for sensitivity). Broadly, there are two reasons for this:

1. Accuracy stats were determined under laboratory conditions.

FDA authorization hinged on each manufacturer asking people in its studies to self-test. Although the exact protocol varied from manufacturer to manufacturer, testing conditions were never very realistic. For example, BinaxNOW had people self-test "under the observation and coaching of a clinical site staff member trained as a proctor," while iHealth had people self-test "under the observation of a clinical site staff member trained as a proctor" who presumably also did some coaching and/or correction. Although a few companies (e.g., Flowflex) left people alone, their studies were conducted in "simulated home settings," which means that even if people received no verbal instructions or monitoring (which seems unlikely), they did test in a company lab. A lab environment serves as a constant reminder that you're in a study, and might therefore cause you to be unusually careful about following instructions.

2. Accuracy for self-testing relies in part on completing a task of moderate complexity.

The less-than-perfect accuracy of rapid tests reflects a combination of technological limitations and user error. If you've taken one of these tests already, you can appreciate why the latter might occur. Testing yourself properly is a little more complicated than, say, running a dishwasher. You need the ability to understand the instructions, the willingness to follow those instructions, the patience necessary to complete all steps, and careful attention to the details. Social science researchers treat each of those variables (reading comprehension, compliance, self-control, and attentiveness to detail) as roughly normally distributed. In other words, about half the adult population is below average on each variable, and some people are below average on more than one. Presumably, participants in the manufacturers' studies were sufficiently competent and/or got the necessary support from proctors. Not everyone has that much competence or support. Add the occasional distractions of home life to the mix, and you can see that some people are going to make mistakes.

In sum, we can expect real-world accuracy to be lower than the reported stats. How much lower will never be known with much precision. Some people who get false positives won't ever realize, much less report, the occurrence of a false positive, because they'll just assume they're asymptomatic, adjust their behavior to protect others, and wait it out (at which point their next test will probably reveal a true negative). Likewise, some people who get false negatives won't realize or report the false negative, because they feel fine and have decided they don't need a second test anytime soon.

Why isn't the accuracy (particularly sensitivity) for approved tests higher?

Crudely speaking, the technology can't do better than it already does, and user error is inevitable.

Specificity above 97% and sensitivity of at least 84% sound pretty good until you remember that millions of tests are being taken every day, and the Biden administration just started distributing half a billion tests. Even if the percentage of errors is low, the absolute numbers of false positives and false negatives, if knowable, might be high enough to cause concern. (See Appendix B for more detail.)
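To get a feel for the absolute numbers, here is a rough back-of-the-envelope sketch. Every input is an assumption chosen for illustration: 3 million tests per day (standing in for "several million"), 10% of test-takers infected (the hypothetical figure used in Appendix B), and the lowest reported sensitivity (84%) and specificity (97%). The function name `expected_daily_errors` is made up for this sketch.

```python
def expected_daily_errors(tests_per_day, prevalence, sensitivity, specificity):
    """Return (false_negatives, false_positives) expected per day.

    prevalence, sensitivity and specificity are fractions between 0 and 1.
    """
    infected = tests_per_day * prevalence
    uninfected = tests_per_day - infected
    false_negatives = infected * (1 - sensitivity)    # infected people the test misses
    false_positives = uninfected * (1 - specificity)  # uninfected people flagged anyway
    return false_negatives, false_positives


# All four inputs below are illustrative assumptions, not measured values.
fn, fp = expected_daily_errors(3_000_000, 0.10, 0.84, 0.97)
print(f"false negatives per day: {fn:,.0f}")
print(f"false positives per day: {fp:,.0f}")
```

Under these assumed inputs the sketch gives roughly 48,000 false negatives and 81,000 false positives per day. The real figures are unknown, since neither the true prevalence nor real-world accuracy is known.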

Can I choose which test I get from the government?

No. As noted on the official website, "all tests distributed as part of this program are FDA-authorized at-home rapid antigen tests. You will not be able to choose the brand you order as part of this program." I wouldn't be particularly concerned about this. Assuming that you follow test instructions carefully, your chances of testing error are more strongly influenced by the prevalence of COVID-19 than by the small differences between tests in accuracy. (This is explained at the end of Appendix B.)

What's my actual risk of an inaccurate test result?

The answer to this question isn't particularly complicated, but it takes a lot of words to explain, so I've addressed it separately in Appendix B. Long story short, sensitivity and specificity don't convey your actual risk of testing error. As the prevalence of COVID-19 increases, the chances of a false negative increase, while the chances of a false positive decrease.

What should I do?

Studies suggest that, apart from how carefully you follow the test kit instructions, the strongest influence on real-world accuracy is the timing of a test relative to your suspected or actual exposure to infected people and/or the onset of potential symptoms.

Here are the recommendations from public health experts that make the most sense to me, although they're admittedly very conservative and may call for more testing than you'd like:

1. If you plan to be with people you worry about infecting, test yourself as close as possible to the time you'll be meeting them. If possible, test more than once.

2. If you've been exposed to someone with COVID-19, or you think exposure is plausible (e.g., because you've traveled or been at a party), test yourself two or three days after the exposure. (If you test earlier, the viral load may not have increased to the point of generating detectable antigen levels.) If the test is negative, test again up through the sixth day after exposure. (Advice varies as to whether you should test every day or every other day.)

3. If you're experiencing COVID-like symptoms, wait a day before testing, so that if you are infected, the viral load will grow and produce antigen levels more likely to exceed the test's detection limit. If your test is negative, repeat the test a day or two later.

All of the recommendations above serve to increase the sensitivity of testing. There's also a recommendation relevant to specificity:

4. If you test positive, seek confirmation from a PCR test, or at least from another rapid test, but assume in the meantime that you're contagious, because the chances that you've obtained a false positive are low.

All of these recommendations point to the value of serial testing and reflect the assumption that the chances of two consecutive errors are extremely low. Here's an additional recommendation:

5. Assume that some of the people you meet in public are infected. They're out in public because (a) they didn't test, or (b) they've gotten a false negative from an at-home test, or (c) they got a true positive result but don't feel poorly and are assuming it’s fine to mingle.
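The logic behind serial testing (that two consecutive errors are very unlikely) can be sketched with a one-line calculation. The big assumption here is that each test errs independently of the others. That is optimistic, because a low viral load or a repeated swabbing mistake can make errors cluster, so treat the output as a best case. The function name `chance_of_repeated_error` is made up for this sketch.

```python
def chance_of_repeated_error(single_error_rate, n_tests):
    """Chance that n_tests in a row all make the same error.

    Assumes the tests err independently of one another (a simplification).
    single_error_rate is a fraction, e.g. 0.16 for a test with 84% sensitivity.
    """
    return single_error_rate ** n_tests


# Infected person, test with 84% sensitivity: chance of missing the infection
# once, twice in a row, and three times in a row.
for n in (1, 2, 3):
    print(n, f"{chance_of_repeated_error(1 - 0.84, n):.4f}")
```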

That's it for this week. Now you can say you studied for your COVID-19 test!

Appendix A: Cannabis and COVID

For the past 10 days, innumerable journalists and social media users have been saying that marijuana affords protection against COVID-19, thanks to a new study, led by pharmaceutical researchers at Oregon State, showing that the marijuana plant (Cannabis sativa) contains three compounds that prevent SARS-CoV-2 from entering healthy cells. That's encouraging news, but you don't ingest those compounds when you smoke or eat cannabis, because they're changed by the heating necessary to make the plant ingestible. THC-A, for example, binds to the SARS-CoV-2 spike protein and keeps it from infecting cells, but THC-A gets changed to THC when cannabis is processed for consumption, and THC, like everything else in the final product, doesn't bind to the spike protein or do anything else that prevents COVID-19. At best, smoking weed would only help you chill out about the pandemic. (Meanwhile, the researchers' next step will be to determine whether the compounds they identified are effective in animals and people rather than just in test tubes. Stay tuned...)

Appendix B: A closer look at test accuracy

This is a long appendix that dips into some of the weeds of probability statistics. My purpose is to explain how we can estimate the chances of testing error, and to give some context for reported differences in the sensitivity and specificity of at-home tests currently on the market. This is a bit of a slog, so here's a two-sentence summary if you wish to skip the rest: As the prevalence of COVID-19 increases, your chances of a false negative test will increase, but your chances of a false positive will decrease. The exact probabilities of either type of error can't be determined, because we don't know exactly how many people have COVID-19.

Background

Sensitivity and specificity are properties of tests. In other words, they don't change as the prevalence of a disease changes. However, your chances of getting a false positive or a false negative do depend on the current case rates.

That sounds counterintuitive, and, indeed, a lot of people, including journalists and medical professionals, get it wrong. The temptation is to say that if a test has 91% sensitivity, for example, then the false negative rate is 9%, and if I take the test, I have a 9% chance of getting a false negative. Below I'll be explaining why this is incorrect, and I'll note how the chances of testing error are properly calculated.

Sensitivity and specificity formulas

Let's start with sensitivity, i.e., how accurately a test detects people who are truly infected. If I say a test has 94% sensitivity, I mean it yielded a positive result for 94% of the infected people who took it, while yielding false negatives for the other 6%. For example: If exactly 100 infected people were tested, 94 would've gotten a true positive, and 6 would've gotten a false negative.

You can see from this example that sensitivity equals the number of true positives divided by the entire group of sick people tested, and the entire group of sick people tested consists of those who get true positives (in this case, 94 people) and those who get false negatives (in this case, 6 people). Thus, the formula for sensitivity is:

Sensitivity = TP/(TP + FN) x 100

(In the preceding example, TP = 94, and FN = 6. We multiply by 100 to convert to percentages.)

Based on similar logic, the formula for specificity is:

Specificity = TN/(TN + FP) x 100

For example, if I test 100 people who don't have COVID-19, and 98 of those people obtain a negative result, while 2 obtain a false positive, then I will conclude that the test’s specificity is 98/(98 + 2) x 100, which corresponds to 98%.
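For readers who like to check the arithmetic, the two formulas can be sketched in a few lines of Python. The function names are just labels chosen for this sketch, and the inputs are the counts from the two examples above.

```python
def sensitivity(tp, fn):
    """Percentage of infected people who test positive: TP/(TP + FN) x 100."""
    return 100 * tp / (tp + fn)


def specificity(tn, fp):
    """Percentage of uninfected people who test negative: TN/(TN + FP) x 100."""
    return 100 * tn / (tn + fp)


print(sensitivity(tp=94, fn=6))   # 94.0
print(specificity(tn=98, fp=2))   # 98.0
```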

PPV and NPV formulas

When you look at the formulas for sensitivity and specificity, you can see that the stats they generate aren’t affected by the prevalence of disease in the population. But, as we’ll see, these percentages don't translate into individual risk estimates for test error.

If you want to know your chances of getting a false positive, you need to know what percentage of all positive tests are false. Or, to turn it around, you need to know what percentage of all positive tests are true, which you can determine by dividing the total number of true positives by the total number of positives (both true and false). This is called the positive predictive value, or PPV. Here's the formula:

PPV = TP/(TP + FP) x 100

If I test a large sample, and 100 people get a positive result, but I later determine that 2 of those positive results were false, then the PPV would be 98%. (This is not the same as specificity, because we're only considering positive tests.) You can then predict that, if you test positive, the chance that your result is a false positive is 2%.

On the other hand, if you want to know your chances of getting a false negative, you need to know what percentage of all negative tests are false. This can be determined from the negative predictive value, or NPV, which is the percentage of negative results that are true negatives:

NPV = TN/(TN + FN) x 100

The logic here mirrors that of the PPV formula. If I test a large sample of people, and 100 of them get a negative result, but 11 of those 100 people are actually infected, then the NPV would be 89%. (This isn't the same as sensitivity, because only negative tests are considered.) Here you can predict that, if you test negative, the chance that your result is a false negative is 11%.
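The PPV and NPV formulas can be sketched the same way. Again, the function names are only labels for this sketch, and the inputs are the counts from the two examples above (98 true and 2 false positives; 89 true and 11 false negatives).

```python
def ppv(tp, fp):
    """Percentage of positive results that are true: TP/(TP + FP) x 100."""
    return 100 * tp / (tp + fp)


def npv(tn, fn):
    """Percentage of negative results that are true: TN/(TN + FN) x 100."""
    return 100 * tn / (tn + fn)


print(ppv(tp=98, fp=2))    # 98.0
print(npv(tn=89, fn=11))   # 89.0
```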

A concrete example

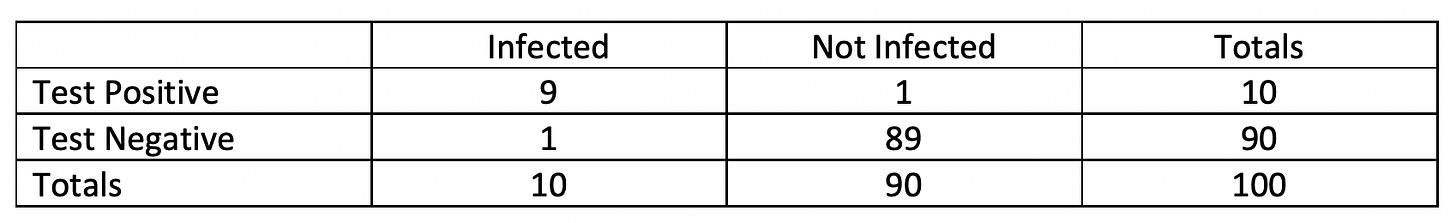

Let's assume that 10% of Americans are currently infected. Let's also assume that we use an at-home test that has 90% sensitivity and 98.8% specificity (which would mean that we're using one of the better tests currently on the market). If we sample 100 people, our data should look like this:

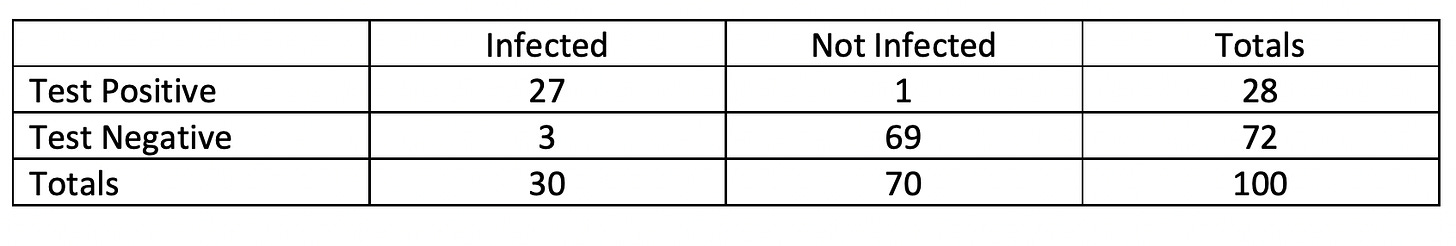

Now imagine a different scenario in which 30% of Americans are currently infected. (We may reach that point soon, but it's hard to know when, or whether we're already there, because case rates are rising but not everyone is reporting positive at-home results.) If we test 100 people using the same test as before, we should expect 27 true positives, 3 false negatives, 69 true negatives, and 1 false positive.

Remember that test sensitivity and specificity are the same in each scenario. However, for the first scenario, if we apply the formula PPV = TP/(TP + FP) x 100, we get 9/10 x 100 = 90%. In the second scenario, applying the same formula, we get 27/28 x 100 = 96.4%. This illustrates a general pattern: The higher the case rates in the population, the higher the PPV will be.

Now apply the formula NPV = TN/(TN + FN) x 100 to each scenario. For the first scenario, we get 89/90 x 100 = 98.9%. For the second scenario, we get 69/72 x 100 = 95.8%. This illustrates another general pattern: The higher the case rates in the population, the lower the NPV will be.
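Both scenarios can be reproduced with a short sketch. It assumes a sample of exactly 100 people and rounds every group to whole persons, which is how the counts in this example were obtained. The function name `expected_counts` is made up for this sketch.

```python
def expected_counts(n_people, prevalence, sensitivity, specificity):
    """Expected whole-person counts of each outcome (inputs are fractions)."""
    infected = round(n_people * prevalence)
    uninfected = n_people - infected
    tp = round(infected * sensitivity)    # infected and correctly flagged
    fn = infected - tp                    # infected but missed
    tn = round(uninfected * specificity)  # uninfected and correctly cleared
    fp = uninfected - tn                  # uninfected but flagged
    return {"TP": tp, "FN": fn, "TN": tn, "FP": fp}


for prevalence in (0.10, 0.30):
    c = expected_counts(100, prevalence, sensitivity=0.90, specificity=0.988)
    ppv = 100 * c["TP"] / (c["TP"] + c["FP"])
    npv = 100 * c["TN"] / (c["TN"] + c["FN"])
    print(f"prevalence {prevalence:.0%}: {c}  PPV = {ppv:.1f}%  NPV = {npv:.1f}%")
```

With these inputs the sketch yields a PPV of 90.0% and an NPV of 98.9% at 10% prevalence, and a PPV of 96.4% and an NPV of 95.8% at 30% prevalence.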

General implications

Why is all this important? Well, it's PPV and NPV, rather than specificity and sensitivity, that tell us about the chances of testing error. Specifically, the chance of a false negative is 100% - NPV, while the chance of a false positive is 100% - PPV.

Although in both scenarios above the test had 90% sensitivity, the chance of a false negative in the first scenario is actually only 1 in 90, or about 1.1%. (You can get that value by dividing 1 by 90, or by subtracting the NPV value of 98.9% from 100%.) The chances of a false negative increase to 4.2% in the second scenario. In other words, as the prevalence of COVID-19 increases, your chances of a false negative increase. Those chances can't be calculated precisely, because we don't know the actual prevalence, but as rates of infection continue to rise, you should expect the chances of a false negative test to go up.

That's the bad news. The good news is that as prevalence increases, the chances of a false positive decrease. You can see this by applying the formula for PPV. Do the math and you'll find that the chances of a false positive in the first scenario are 10%, while the chances in the second scenario are 3.6%. So, even though false positives are always possible, the chances of that kind of error diminish as case rates increase.
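The two trends can be seen across a range of prevalence values with a short sketch. It uses the same hypothetical test as above (90% sensitivity, 98.8% specificity) but works with fractions of the population instead of whole people. Because nothing is rounded, the numbers differ somewhat from the 100-person head counts, though the direction of each trend is the same. The prevalence values and the function name `error_chances` are chosen purely for illustration.

```python
def error_chances(prevalence, sensitivity=0.90, specificity=0.988):
    """Return (chance a negative is false, chance a positive is false), in percent."""
    tp = prevalence * sensitivity
    fn = prevalence * (1 - sensitivity)
    tn = (1 - prevalence) * specificity
    fp = (1 - prevalence) * (1 - specificity)
    chance_false_negative = 100 * fn / (tn + fn)   # 100% - NPV
    chance_false_positive = 100 * fp / (tp + fp)   # 100% - PPV
    return chance_false_negative, chance_false_positive


for prevalence in (0.05, 0.10, 0.20, 0.30):
    false_neg, false_pos = error_chances(prevalence)
    print(f"prevalence {prevalence:.0%}: "
          f"false negative {false_neg:.1f}%, false positive {false_pos:.1f}%")
```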

Final comment

Good estimates of PPVs and NPVs for the various at-home tests are not available, because the true prevalence of COVID-19 is increasingly unclear, and because the most accurate versions of PPV and NPV stats would use local case rates rather than national trends. All I can say is that given the data reported to the FDA by at-home test manufacturers, it appears that changes in COVID-19 prevalence strongly outweigh differences among tests in determining the risk of testing error. In other words, I don't recommend worrying about which at-home test you take. Although it's tempting to say that you might as well use tests that have the highest sensitivity and specificity, those stats were gathered via protocols that differed from manufacturer to manufacturer, the test methods weren’t very realistic, the samples weren't large, and, again, sensitivity and specificity stats don’t tell you everything you need to know.