Responding to Misinformation

What I love about this Google blog entry is that it was posted on the morning of April 1st. International Fact-Checking Day is actually April 2nd.

Oops.

These days we don't spend much time chuckling about misinformation. We're more likely to be worrying about anti-vaccine myths, stolen election claims, deepfake images, and other widely disseminated falsehoods. Misinformation has become such a scourge that the Associated Press website maintains a page called "Not Real News: A look at what didn't happen this week." The page is always full.

Fake news and other sources of misinformation have also become hot topics in social science – at least six new studies have been published since early April. In this newsletter I'll be discussing one of those studies, because it offers a fresh perspective on dealing with misinformation. It may also illustrate a common statistical problem called "central tendency bias", a concept worth keeping in mind whenever you hear survey findings being discussed. At the end of the newsletter, I'll circle back briefly to what we can do to reduce the spread and impact of misinformation.

Back-end vs. front-end strategies

"Cocaine kills the corona virus, scientists is shocked to discover that this drug can fight the virus" (From an early-pandemic Facebook post.)

How do you respond when a person says something like that?

In a recent newsletter, I distinguished between what I call back-end versus front-end strategies for combatting misinformation.

"Back-end strategies" are deployed before a person is exposed to false or misleading information. Examples include the activities of fact-checking organizations (Snopes, FactCheck, PolitiFact, SciCheck, etc.), as well as efforts by social media companies to flag if not altogether weed out fake news. In this case, Politifact debunked the statement about cocaine, and Facebook flagged it.

Arguably the most powerful back-end strategies focus on people themselves. A number of studies attest to the benefits of inoculation, which includes everything from the critical thinking and media literacy woven into the K-12 curriculum, to "pre-bunking" apps that introduce students to a small amount of fake news and then unpack how it works – or, in the case of Bad News, allow users to become "fake news tycoons" and learn the strategies firsthand. (A sufficiently inoculated student might then notice that the Facebook post provided neither a source nor a rationale for why cocaine would be effective. Moreover, a Google or ChatGPT search would yield no clue that such a study has ever been conducted.)

"Front-end strategies" kick in at the moment a person engages with false or misleading information. Either the person is on the verge of sharing it, or someone has just shared it with them.

Some front-end strategies focus on communication technology. For instance, in a study published this January, researchers from Yale and USC showed that (a) a small percentage of people are responsible for the majority of misinformation shared on social media, (b) over-sharers are reinforced by likes, comments, new followers, and other platform-related cues, and (c) minor adjustments to these platforms may reduce the amount of misinformation shared. The idea is that simple tweaks to the technology (fact-check buttons, modified algorithms, etc.) might help social media users reflect on what they post and choose not to spread misinformation.

(Unfortunately, in this study, what actually helped was ensuring that people received likes and comments for sharing true information but not when they shared misinformation. This is not a very practical finding, since we can't – and wouldn't want to – control the kinds of feedback people get from other users.)

The front-end strategies we grapple with most often involve talking to people who are clearly misinformed. There's a ton of advice about this, and most of it seems pretty sensible. For instance:

–Don't just talk; listen to what the other person is saying, and make sure they feel heard ("So, you're saying that cocaine kills the coronavirus?")

–Affirm what can be affirmed ("Wow, yeah, if cocaine kills the coronavirus, that would be shocking").

–Ask the other person where they learned what they've just told you.

–Ask if they want to hear your perspective. If they agree, present your views clearly, with sourcing if possible. (In this case, note that you've never heard of such a study, and that it seems impossible the national news media would overlook it, given how shocking it would be.)

–Be tactful, and avoid sounding disparaging or superior.

There's evidence that these conversational strategies can help, but in the end, they may not be effective, because it's hard to avoid the impression that you're correcting a mistake (because, well, you are). People don't like to be corrected, as the authors of the new study point out.

A new study

This study, published in Scientific Reports last month, was conducted by Drs. Christopher Calabrese at Clemson and Dolores Albarracín at the University of Pennsylvania. The study got very little media coverage, perhaps because it's modest in scope, the results seem undramatic, and the authors were careful not to oversell what they found.

In my view, the study rationale and findings are important enough to have merited more attention outside scientific circles, and so I'll dive right in.

Study rationale

Correcting a misinformed person may not be successful; in any case, you may not have the time to try. We have to get along with lots of people whose beliefs not only differ from our own but are demonstrably wrong. This is where it helps to distinguish between a person's specific beliefs about a topic and their overall attitudes toward it.

Think about COVID-19 vaccines for a moment. (I know...you've been thinking about them for three years… I promise I'll be brief.) Some people who dislike the vaccines believe, mistakenly, that the rates of adverse side effects are high. When you talk to such a person, you might not be able to change their core beliefs about vaccine safety, but you may instill a more positive attitude toward vaccination overall if you lay out the personal and public health benefits of getting vaccinated.

Calabrese and Albarracín use the term "bypassing" to describe this front-end process. You're not directly correcting the person's specific belief. Rather, you're changing their overall attitude by introducing an additional belief (or strengthening an existing one). The person still overestimates the incidence of side effects, but they now view vaccination slightly more positively overall, because you've highlighted the benefits.

Bypassing can't do everything. The more extreme the false belief, the less effective this strategy will be. If you meet one of those esteemed citizens who think that COVID-19 vaccines contain tiny robots that alter human DNA, I doubt you could say anything that would instill more positive attitudes toward vaccination.

In short, bypassing is most likely to be effective when a person's overall attitude arises from a set of specific, fairly reasonable beliefs that aren't uniformly positive or negative.

The purpose of Calabrese and Albarracín's study was to determine how strongly the bypassing of false beliefs actually does affect overall attitudes.

Study design

In Calabrese and Albarracín's first experiment, which will be my focus, 360 participants read an article which explained (falsely) that genetically modified corn can cause severe allergic reactions. As misinformation goes, this might seem less consequential than the idea that the 2020 presidential election was stolen, or that vaccines contain tiny robots, but I think it was a worthwhile choice, given how much health-related misinformation we encounter.

After reading the misinformative article, each individual participated in one of three conditions.

–The correction group read an article that directly refuted the misinformation by explaining that the genetically modified corn is harmless.

–The bypassing group read an article that explained how genetically modified corn can alleviate global hunger.

–The control group did not read an article.

Study findings

The researchers' main interest was in overall attitudes toward genetically modified (GM) food policies. Participants used a 5-point scale to rate their likelihood of supporting policies that restrict the manufacturing/production of GM foods. They also indicated how positively or negatively they felt toward such policies. (Actual policy details were not given.)

Calabrese and Albarracín found that the correction and bypassing groups were less supportive than the control group of restrictive policies. The differences were small but significant. However, the correction and bypassing groups showed no mean differences in attitudes. (Two additional experiments yielded the same findings.)

In other words, if someone mistakenly believes that GM foods are harmful, they will support misguided policies that restrict GM foods. Their support for these policies can be reduced by correcting their belief, or by bypassing it via information on other benefits of GM foods. Correction and bypassing will be equally effective.

What's important about this study is that it points to a way of dealing with misinformation that doesn't require correction. A person who overestimates the health risks of COVID-19 vaccines, for example, might still be induced, via bypassing, to think of vaccination more positively on the whole.

Perhaps this is a bit of a stretch, but one could imagine bypassing having a positive effect on political polarization too. I'll still hate your candidate, thanks in part to some false beliefs I have about them, but I may hate them less if you persuade me of something positive they could do for the country.

Study limitations

In the Discussion section, Calabrese and Albarracín criticize their own study for focusing on the short-term effects of bypassing. They argue that we need to know whether the benefits will endure over time.

I agree this would be helpful to know, but understanding the short-term effects seems useful in its own right. The researchers strike me as overly modest here.

Imagine a social media chat with someone who's ambivalent about getting vaccinated because they mistakenly assume a high rate of nasty side effects. Even if bypassing this belief by discussing vaccine effectiveness only has a temporary effect, the person might still be less likely to post anti-vaccination content, at least for a while. I wouldn't underestimate the short-term benefits. Studies show that disinformation travels more quickly and widely than accurate information does, and so anything that squelches disinformation seems valuable.

The one genuine limitation of the study is a statistical one: Any differences between the means are tiny. For instance, participants rated, on a scale from 1 to 5, how likely they would be to "support policies that restrict the manufacturing/production of genetically modified (GM) foods." Here are the results:

As you can see, the means for the bypassing and correction conditions are nearly identical (2.19 and 2.26, respectively). The mean for the control condition is a little less than half a point higher than the other two. In other words, on average, the control group was more likely to support restrictive GM policies, but by a margin of less than half a point on a 5-point scale.

Central tendency bias

Now let's step back for a moment. Across all of the experiments (especially the second and third ones), every one of the means was close to 3, the midpoint of that 5-point scale. This hints at a problem called "central tendency bias", which is exactly what it sounds like – a tendency to choose the middle value on a rating scale even if it's not how you truly feel.

I can still remember taking some endless, soul-sucking achievement test in 7th grade and just filling in the middle bubble for every question because I wanted so badly to finish, even if it meant that I'd never be allowed to become an 8th grader. Boredom is indeed one reason for central tendency bias, but it's probably not what happened in this particular study.

What if I asked you how much you support policies that restrict the manufacturing or production of GM foods? You'd probably want to know: What policies? What would it mean to support them? (Does "support" mean to simply agree with them? Would I need to speak on their behalf? Donate money?) And does "restrict" mean "limit" or "completely prohibit"?

If you had to answer my question without knowing these details, you might choose the middle value on that 5-point scale, because it's the safest one. If you strayed from the middle value, you wouldn't go far.

Here's another way to put it: Since I don't know what the policies are, what my support for restrictions would entail, or how severe those restrictions would be, choosing a value near the middle of the scale is a "safe" choice, because it's least likely to misrepresent how I'd actually feel if I knew more.

In sum, some participants may have chosen the middle value because the questions were vague. Whenever survey respondents don't fully understand the questions, or they don't want to stand out, or they're bored, or feeling malicious, or in a hurry, they may just choose values at or near the middle of whatever rating scale they're given. For these reasons, central tendency bias can be challenging for survey researchers. (One solution: Exclude any data from people who choose the same option for every question. Calabrese and Albarracín couldn't do this, because they didn't have enough questions.)
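For readers who like to see the mechanics, here is a minimal Python sketch of that exclusion rule. The function names and the ratings are made up for illustration; nothing here comes from Calabrese and Albarracín's data or analysis.

```python
def is_straight_liner(responses):
    """Return True if a respondent gave the same answer to every question."""
    # A single answer can't reveal a pattern, so require at least two.
    return len(responses) > 1 and len(set(responses)) == 1


def drop_straight_liners(respondents):
    """Keep only respondents whose answers vary across questions."""
    return {
        rid: answers
        for rid, answers in respondents.items()
        if not is_straight_liner(answers)
    }


# Hypothetical ratings on a 1-5 scale (invented data, not from the study)
survey = {
    "p1": [3, 3, 3, 3, 3, 3],  # middle bubble every time
    "p2": [2, 4, 3, 1, 2, 5],
    "p3": [5, 5, 5, 5, 5, 5],  # same extreme value every time
    "p4": [3, 2, 3, 3, 4, 3],
}

kept = drop_straight_liners(survey)
print(sorted(kept))
```

The sketch also hints at why this fix needs a long questionnaire: with only a couple of questions, plenty of sincere respondents would give identical answers, and the rule would throw out good data.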

Here someone might say: In the first two experiments, the means were slightly below 3. In the third experiment, the means were slightly above 3. Doesn't this prove that everyone was paying attention? Not necessarily. In the first two experiments, the misinformation described relatively minor health impacts (allergic reactions), and so a few participants might've rated their support lower than the middle value because the word "restrict" can be taken to mean "prohibit". In the third experiment, the misinformation pertained to tumors, which may account for the slightly higher ratings. Again, all of the means were close to 3, and the standard deviations were not large.

In sum, this is a good study that offers a fresh perspective on how to contend with misinformation, but the effects of bypassing were very small, and they may have been obscured by central tendency bias.
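To make the "obscured" part concrete, here is a back-of-the-envelope sketch in Python. It rests on a simplifying assumption of my own: in every group, the same share of participants picks the midpoint regardless of how they feel, while everyone else answers sincerely. The means and the 40% share are hypothetical, not estimates from the study.

```python
MIDPOINT = 3  # middle of a 1-5 rating scale


def observed_mean(true_mean, share_midpoint):
    """Group mean when a share of respondents answers 3 no matter what."""
    return (1 - share_midpoint) * true_mean + share_midpoint * MIDPOINT


def observed_gap(mean_a, mean_b, share_midpoint):
    """Difference between two observed group means under the same bias."""
    return observed_mean(mean_a, share_midpoint) - observed_mean(mean_b, share_midpoint)


# Hypothetical "sincere" means for two groups, 1.5 points apart
sincere_a, sincere_b = 3.5, 2.0

for share in (0.0, 0.2, 0.4):
    gap = observed_gap(sincere_a, sincere_b, share)
    print(f"share choosing midpoint = {share:.0%}, observed gap = {gap:.2f}")
```

Under this assumption the gap shrinks in proportion to the share of midpoint-pickers: if 40% of people in both groups default to 3, a sincere difference of 1.5 points shows up as 0.9.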

Bottom line: How can we reduce misinformation?

Ironically, Generation Z, the "digital natives" who grew up with social media, are said to be more skilled than prior generations at spotting fake news and other forms of misinformation, yet they fall prey to it just as readily, given how much information they're exposed to (and how rapid the exposure while plugged in).

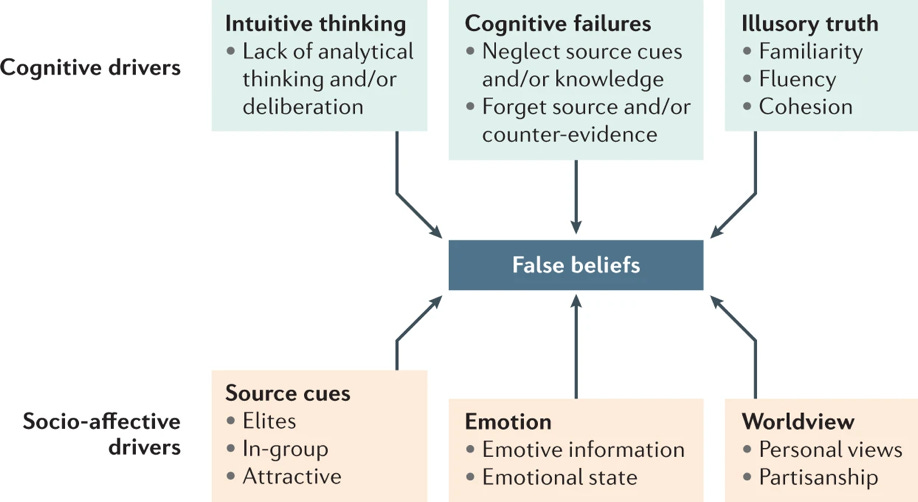

In my opinion misinformation isn't hard to contend with merely because there's so much of it, or because it's so often mixed in with the accurate stuff. What's also challenging is that misinformation stems from so many sources. The figure below, taken from a 2022 review, illustrates the diversity of those sources. The top half of the figure lists cognitive contributors, ranging from faulty reasoning to familiarity effects (the more familiar a statement, the more credible it will seem). On the socio-affective side, we're influenced by variables like the sources of information, our emotional need to believe in it, and our particular world view.

I think a figure like this would be a great starting point for any curriculum or program designed to help combat misinformation. (A few things may be missing, like the incentives for spreading fake news that are inherent to social media platforms, but it does cover a lot of ground.)

Once students understand each of the boxes, the next step would be to discuss what sorts of back- or front-end strategies might work best in each situation. Take that reference to "In-group" in the lower left of the figure. A study of Twitter users, published just a few weeks ago, suggests that conformity plays a key role in the spread of misinformation, in that failing to share fake news diminishes social interactions with other users. Back-end strategies might therefore focus on boosting self-confidence in online settings (e.g., helping students understand that the number of likes they get isn't a reflection of their worth), as well as teaching students how the simple act of sharing information can be influenced by social pressure. Front-end strategies might focus on instilling more reflection at the moment of sharing, including the recognition that when multiple posts convey the same idea, at least some people are just mindlessly copying each other. Adjustments to social media functionalities and algorithms would help too. Meanwhile, bypassing during interactions with a misinformed person can be included as yet one more strategy in what will necessarily be a multi-pronged approach.

Finally, I want to thank Donald Trump bigly for supporting this newsletter. Regardless of your politics, you have to admit that without him there'd be slightly less national dialogue (and research activity) around the topic of misinformation.

Thanks for reading!

Appendix: More on methods

For those of you who enjoy getting a little deeper into the methodological weeds, here are two additional issues.

1. Calabrese and Albarracín did not use a pretest. They presented misinformation about GM foods, followed by condition (correction vs. bypassing vs. nothing), and then measured participant attitudes.

Best practices dictate that attitudes should've been measured at the beginning and end of the experiment, owing to the possibility of pre-existing differences between the groups.

However, I'd argue that the absence of a pretest isn't deeply concerning here, because participants were randomly assigned to groups, and it seems unlikely that in three experiments involving three different samples, the groups would have the same pre-existing differences that affect the results in the same way. It's possible, but unlikely.
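If you'd like to check that intuition, here is a rough simulation sketch in Python. Everything in it is an assumption for illustration: three equal groups of 120 (the first experiment had 360 participants, but I'm guessing at the split), baseline attitudes spread evenly across the 1-5 scale, and a "worrying" imbalance defined as the control group starting at least 0.3 points above both other groups.

```python
import random


def chance_imbalance_rate(n_per_group=120, gap=0.3, trials=2000, seed=0):
    """Share of simulated experiments in which random assignment alone leaves
    the control group's baseline mean at least `gap` above BOTH other groups."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Hypothetical baseline attitudes on a 1-5 scale, before any article
        baseline = [rng.randint(1, 5) for _ in range(3 * n_per_group)]
        rng.shuffle(baseline)  # random assignment: slice the shuffled list
        control = sum(baseline[:n_per_group]) / n_per_group
        correction = sum(baseline[n_per_group:2 * n_per_group]) / n_per_group
        bypassing = sum(baseline[2 * n_per_group:]) / n_per_group
        if control - correction >= gap and control - bypassing >= gap:
            hits += 1
    return hits / trials


rate = chance_imbalance_rate()
print(f"one experiment: {rate:.3f}")
# Independent samples, so the chance of the same fluke three times is rate cubed
print(f"three independent experiments: {rate ** 3:.6f}")
```

The exact numbers depend on the assumptions, but the logic carries over: a fluke that is uncommon in one randomized experiment becomes far less likely to repeat, in the same direction, across three separate samples.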

2. Earlier I commented on the tiny differences between group means. I'm surprised that these differences weren't greater, owing to the possibility of demand characteristics.

A demand characteristic is anything about a study that makes participants feel (rightly or wrongly) that they know what the researcher hopes they'll do. Demand characteristics are undesirable, because they lead some people to attempt to please (or, less commonly, displease) the researcher, rather than responding sincerely.

In this study, after reading an article on the harmfulness of GM foods, participants in the correction and bypassing groups viewed articles presenting GM foods in a more favorable light. Afterwards, they were asked about their attitudes toward GM food policies. You might expect at least some participants to infer that the correction or bypassing articles were meant to elicit more positive attitudes, so that a few of them responded more positively than they truly felt.

We're on the edge of a rabbit hole here, because other participants might've assumed that the researchers were checking on how well they remembered the original article and would remain faithful to what it said, in which case these participants might respond less positively than they truly felt. Other possibilities are imaginable too.

In the end, we shouldn't have to speculate. Demand characteristics could have been minimized in a number of ways, such as asking questions in between presentation of the misinformative article and the corrective or bypassing one, and then again right before administering the attitude measures. These intervening questions would be ones the researchers ask anyway, but they could be strategically placed, so that participants would be less likely to make connections between different elements of the experiment and come up with guesses about what the researchers hoped to find.