Your Voice, Your Heart

Imagine a computer that could detect heart disease merely by listening to your voice. Sounds like science fiction, right? It's actually just science. Recent studies, led by Mayo Clinic researchers, show that an AI-based algorithm can reliably identify cardiovascular problems from audio recordings of people talking. The study I'll be discussing focuses on pulmonary hypertension (high blood pressure in lung arteries).

Why is research like this a big deal?

1. Pulmonary hypertension (PH) is typically present when heart failure occurs, and heart failure leads to approximately 1 million hospitalizations per year in the U.S. Heart failure is the leading cause of hospitalization for people over 65.

2. Diagnoses of PH currently require patients to be physically present for testing. Studies like this may expand our growing list of options for remote health care, or telemedicine. (Voice analysis algorithms, for example, are currently being developed to screen remotely for other conditions, such as Parkinson's disease and COVID-19.)

3. There's something fishy here. (I'll get to that at the end of this newsletter; for the moment, I hope it sounds mysterious.)

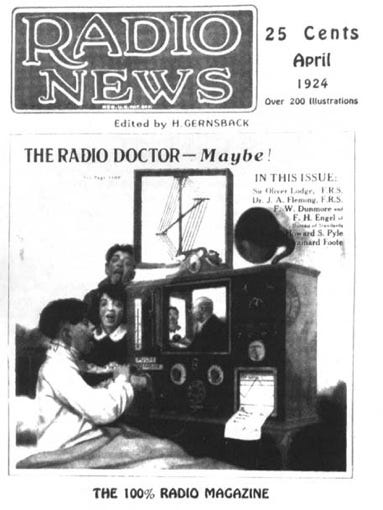

Telemedicine has been around for a while. In the 1920s, medical treatment via radio was already widely envisioned (see the April 1924 magazine cover). Over the past six decades, technology has been increasingly used to connect patients with health care providers. And, as you know, telemedicine spiked during the pandemic, not always by choice.

(Photo from the Radiology Information System Consortium.)

Most applications of telemedicine are widely appreciated. Being able to use a laptop or phone to request refills, view test results, and ask questions is among the advantages of 21st-century health care. At the same time, many patients – and physicians – have reservations about remote care. (How many is "many"? That's hard to say, because the stats vary a lot from survey to survey. As far as I can tell, when survey questions are comprehensive enough, well over half of respondents express concerns, primarily about quality of care, but also in some cases about privacy, or about the challenges of maintaining a good "webside" manner.)

This newsletter deals with a quality of care issue. Specifically, I want to discuss a 2020 Mayo Clinic study published in the prominent journal PLoS One. The misuses of statistics in this study are interesting – and important, because computer-based voice analysis may someday become an established method of screening for certain kinds of heart disease. Whether these screenings are routine or presented as an option, patients need to know how sensitive and specific they are. (And, if there's anything "fishy" about the research, we need to know that too.)

Sensitivity and specificity

We all care deeply about sensitivity and specificity, even if we don't use those particular terms.

In the context of medical screening, sensitivity refers to the percentage of time a screening procedure correctly detects an existing problem. Specificity refers to the percentage of time the procedure correctly indicates no problem.

We care about these things because a test with low sensitivity might allow us to walk around with an undiagnosed medical problem, while a test with low specificity might cause us to worry about, and seek treatment for, a medical problem we don't actually have.
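For readers who like to see the arithmetic, here is a minimal sketch of how the two numbers are calculated. The counts are invented purely for illustration and have nothing to do with the study discussed below; the function name is my own.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from screening counts.

    tp: people with the problem who were flagged (true positives)
    fn: people with the problem who were missed (false negatives)
    tn: people without the problem who were cleared (true negatives)
    fp: people without the problem who were flagged (false positives)
    """
    sensitivity = tp / (tp + fn)  # share of existing problems that get detected
    specificity = tn / (tn + fp)  # share of healthy people correctly cleared
    return sensitivity, specificity


# Hypothetical counts, invented for illustration only (not from any study).
sens, spec = sensitivity_specificity(tp=40, fn=10, tn=90, fp=60)
print(f"sensitivity = {sens:.0%}, specificity = {spec:.0%}")
# prints: sensitivity = 80%, specificity = 60%
```

In this made-up example the screening catches most real problems, but 4 in 10 healthy people would be told they might have one.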

Overview of study

Participants in this study consisted of 83 patients (average age 61.6 years) currently being treated for heart disease. Each patient used their own smart phone to make three 30-second recordings of themselves reading a text, describing a positive emotional experience, and describing a negative emotional experience. The main finding was that computer analyses of the voice samples predicted which participants had higher vs. lower pulmonary hypertension (PH).

At this point, you're probably wondering: What was it about patients' voices that revealed their PH levels? I'm wondering too. Because here we encounter a lack of information that often crops up in research on new technologies. Researchers tend to be vague about key details if they're concerned about others profiting from their technology, and, given the rates of cardiovascular disease in the U.S., this particular technology does look like a potential moneymaker.

Perhaps there were other reasons for the researchers' vagueness. In any case, we know at least that an AI-based program was used to extract 223 acoustic features from each patient's audio recordings. These features were combined to create a single score, a so-called "vocal biomarker", that predicted PH levels. The researchers tell us almost nothing about which features were extracted or how they were combined, nor do their citations provide much clarification. All we know is that the features pertain to familiar acoustic properties such as pitch, loudness, jitter (variations in pitch), and shimmer (variations in loudness).

Results and evaluation

Lack of methodological detail would matter less if the program genuinely worked. Indeed, patients with higher PH levels had significantly higher vocal biomarker scores than patients with lower PH levels (p = .046). This is a promising result, but don't be too impressed just yet.

1. PH levels and age may have been confounded.

In this study, a "high" PH level was defined as pulmonary arterial pressure of 35 mmHg or greater, because, according to the researchers, this is a conventional cut-off for moderately high PH. The main statistical analyses focused on comparing two groups: high vs. low PH levels.

Unfortunately, the high PH group was 5.6 years older on average than the low PH group (65.4 years vs. 59.8 years, respectively), a difference that did not reach statistical significance (p = 0.154) but is still sizable. Studies show that our voices change with age, and so it's possible that the AI program used in this study was simply distinguishing between older vs. younger participants. In technical terms, PH levels may have been confounded with age.

The researchers noted several times that their results were obtained after "adjusting for age", but they never explained what that adjustment was. This is a problem, because many sorts of adjustments are possible, and none of them are proprietary or otherwise need to be kept secret. They're just routine statistical procedures and, in most peer-reviewed studies, they're identified. I e-mailed the lead author to ask for details but haven't received a response yet. Meanwhile, owing to small sample size, any sort of adjustment would yield analyses with limited statistical power.
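To make "adjusting for age" concrete, here is a sketch of one simple possibility: remove a straight-line age trend from the scores, then compare the groups on what's left over (the residuals). I am not suggesting this is what the researchers did; we don't know what they did. The patients, scores, and function names below are all hypothetical, and the data were deliberately constructed so that age explains most of the group difference.

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept for y = intercept + slope * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx


# Hypothetical patients: (age, vocal biomarker score, in high-PH group?).
# Invented for illustration; these are not data from the study.
patients = [
    (52, 0.30, False), (57, 0.38, False), (60, 0.41, False), (63, 0.47, False),
    (61, 0.45, True), (66, 0.52, True), (69, 0.55, True), (72, 0.61, True),
]
ages = [age for age, _, _ in patients]
scores = [score for _, score, _ in patients]
is_high = [high for _, _, high in patients]


def group_gap(values):
    """Mean of the high-PH group minus mean of the low-PH group."""
    high = [v for v, h in zip(values, is_high) if h]
    low = [v for v, h in zip(values, is_high) if not h]
    return sum(high) / len(high) - sum(low) / len(low)


# Step 1: estimate how the score changes with age across all patients.
slope, intercept = fit_line(ages, scores)
# Step 2: subtract the age-predicted score, leaving the part age can't explain.
residuals = [s - (intercept + slope * a) for a, s in zip(ages, scores)]

raw_gap = group_gap(scores)          # group difference before adjustment
adjusted_gap = group_gap(residuals)  # group difference after removing age trend
print(f"raw gap = {raw_gap:.3f}, age-adjusted gap = {adjusted_gap:.3f}")
```

With these made-up numbers, nearly all of the difference between groups disappears once age is taken into account. Other adjustments (for example, including age as a covariate in a regression model) can give different answers, which is exactly why the method needs to be reported.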

2. The generalizability of the findings is limited.

The small sample size and the restriction of the sample to patients who already had heart disease both limit the generalizability of the results.

With respect to sensitivity, we can't tell how the AI program would perform with people whose PH levels are higher than normal, but not as high as the "low" group in the present study. It's not clear whether the program is sensitive enough to identify that in-between group.

With respect to specificity, we don't know how many healthy people the program would mislabel as having elevated PH levels.

(If the researchers had treated PH level as a continuous variable, we might have data that addresses these questions. For example, a strong correlation between vocal biomarker score and PH level would show us that as vocal biomarker score increases, PH levels increase. That would allow clinicians to know when they should start to worry. However, the researchers just compared two groups, high vs. low PH, so we can't see that linear relationship.)
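Here is a small sketch of the difference between the two kinds of analysis. The pressure readings and biomarker scores are hypothetical, invented for illustration; only the 35 mmHg cut-off comes from the study. The function name is my own.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical data, not from the study.
pressure = [22, 27, 31, 34, 36, 41, 48, 55]        # pulmonary arterial pressure, mmHg
score = [0.2, 0.5, 0.3, 0.6, 0.5, 0.8, 0.7, 1.0]   # vocal biomarker score

# Continuous analysis: uses every patient's actual pressure reading.
r = pearson_r(pressure, score)

# Two-group analysis: collapses each reading to "high" or "low" at the cut-off.
CUTOFF = 35
low = [s for p, s in zip(pressure, score) if p < CUTOFF]
high = [s for p, s in zip(pressure, score) if p >= CUTOFF]
gap = sum(high) / len(high) - sum(low) / len(low)

print(f"correlation r = {r:.2f}")
print(f"mean score gap, high vs. low group = {gap:.2f}")
```

Notice what the two-group version throws away: a patient at 34 mmHg and a patient at 22 mmHg are treated as identical, while patients at 34 and 36 mmHg land in different groups. The correlation keeps that information.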

3. Natural variability in speech patterns is not adequately accounted for.

When you talk, your speech patterns vary depending on the topic, your mood, your energy level, what's happening around you, and so on. This study assumes that something about how you speak remains constant across these different situations, and that these constant features can provide clues about your cardiovascular health.

The researchers claimed that they found such constancy. Specifically, they calculated something called an intra-class correlation coefficient (ICC), which tells you the overall extent of similarity across each patient's three voice samples. That coefficient was 0.829 (out of a maximum value of 1.0).

By convention, an ICC of 0.829 is considered "good" (while values above 0.90 are considered "excellent"), but in this particular study, we can't use such descriptors. Here, there's not enough information to judge the clinical implications of that less-than-perfect coefficient. It's impossible to tell how often the AI program would be misled, simply because the individual's voice sample was unusual (e.g., because they didn't have enough coffee that morning, or they had too much coffee, or they were distracted, or whatever).
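If you're curious how an ICC is calculated, here is a sketch of one common version (a one-way random-effects ICC for single measurements). There are several forms of ICC and I'm assuming this one only for illustration; I don't know which form the researchers used. The scores below are hypothetical, and the function name is my own.

```python
def icc_oneway(rows):
    """One-way random-effects ICC for single measurements.

    rows: one list per patient, each holding that patient's k scores
    (here, one score per voice recording).
    """
    n = len(rows)        # number of patients
    k = len(rows[0])     # recordings per patient
    grand_mean = sum(sum(r) for r in rows) / (n * k)
    row_means = [sum(r) / k for r in rows]
    # Between-patient mean square: how much patients differ from one another.
    msb = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    # Within-patient mean square: how much one patient's recordings differ.
    msw = sum((x - m) ** 2 for r, m in zip(rows, row_means) for x in r) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


# Hypothetical biomarker scores for 5 patients x 3 recordings (not study data).
scores = [
    [0.42, 0.45, 0.40],
    [0.61, 0.58, 0.66],
    [0.30, 0.35, 0.28],
    [0.75, 0.70, 0.78],
    [0.52, 0.49, 0.55],
]
icc = icc_oneway(scores)
print(f"ICC = {icc:.3f}")

# Sanity check: if every recording from a patient were identical, ICC would be 1.
perfect = icc_oneway([[1, 1, 1], [2, 2, 2], [3, 3, 3]])
```

The coefficient summarizes consistency across the whole sample. It doesn't tell you how far any one person's score might drift on an off day, which is the question a patient being screened would care about.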

The fishy part

In this study, the researchers identified their AI program three times. Twice they referred to it as an application being developed by "Vocalis" (with the word in quotation marks). Once they referred to it as "'Vocalis's' clinical trial application" (with 'Vocalis's' italicized and in quotations). This use of quotations is unusual.

What exactly is Vocalis? Evidently it was a start-up company called Vocalis Health. I say "was" because the company apparently no longer exists. Their website is suspended, and the only available phone numbers I could find either didn't work or simply rang without going to voice mail.

Meanwhile, the lead author and colleagues now have three small studies either published or in press using the Vocalis program to identify three different cardiovascular-related outcomes. Each of these studies has the methodological limitations I described here, and more. (See here for some experts' concerns about one of the studies, including uncertainty about what the vocal biomarker truly measures.)

I'm not accusing the researchers of anything but flawed statistics. By "fishy" I don't mean to imply misconduct. Rather, I'm referring to several unanswered questions we ought to have answers to. For example:

1. Why the strange reference to "Vocalis" in the study I discussed, instead of just naming the company (Vocalis Health) as ordinarily done in scientific articles? A Google search indicates that Vocalis Health was still in business at the time this study was published.

2. Why the lack of information about statistical procedures, including adjustments for variables such as age, and why the apparent reluctance to run the analyses of greatest relevance (e.g., treating PH levels as a continuous variable)?

3. Why the piecemeal approach from study to study, each addressing a different research question? This isn't an unusual way to do research, but given that other experts have repeatedly raised questions about what the AI program measures, and given that the program is clearly intended for use in clinical settings someday, why do the researchers keep sacrificing depth for breadth? If the houses you've built have floors that keep caving in, the one thing you shouldn't do is build the same houses somewhere else.

Conclusion

The history of medicine is littered with examples of accepted practices that turned out to be bad ideas. Think of bloodletting. Heroin for children (to treat coughing). And, most concerning, practices that are both widespread and legitimized (e.g., through FDA approval) yet may be less than ideal. Most of us aren't medical experts, and so we're not in a position to directly question the legitimacy of these practices. In the case of this study, however, it doesn't take much knowledge about statistics to raise questions and identify problems. At the moment, in spite of the excitement many of us share about new health care technologies, I would be concerned about the use of voice analysis algorithms to screen for cardiovascular problems.