Creativity, Part I

What can you do with a brick?

Take 5 minutes to list as many creative uses for a brick as you can think of, and you'll have completed an "alternative uses" task.

This is a fairly common approach to measuring creativity. You can see it in research studies, like one on marijuana and creativity I'll be discussing next week. Some companies use it to test the creativity of job applicants. Students routinely complete tests like this to qualify for gifted programs.

We might ask, though: What exactly do these tests measure, and why should we measure it?

The second question seems easier than the first one. In the words of two leading creativity researchers: "The psychological study of creativity is essential to human progress [if] strides are to be made in the sciences, humanities, and art..."

That's a strong statement. Perhaps too strong. Systematic research on creativity didn't begin until the 1950s. Prior to that, creative people drove human progress quite nicely. (Shakespeare didn't subscribe to the Creativity Research Journal. Neither did Einstein.)

The statement is also missing a few things. Business experts also consider creativity essential for entrepreneurship, organizational growth, and effective leadership. Educators and parents perceive a need to boost children's creativity. And, whether you're an executive, a teacher, or an anxious parent, there are lots of creativity-enhancing products and services for sale, not to mention a zillion free web pages, blog posts, podcasts, YouTube videos, etc.

In short, many people, for many different reasons, stress the importance of measuring and enhancing creativity. But what is it exactly that gets measured?

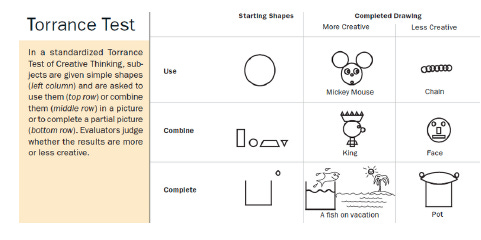

You can see we've already covered a lot of ground. Listing things one can do with a brick seems quite different from the kinds of creativity that are essential to entrepreneurial success. And, apparently, yet another skill set must be deployed for the following items from the most widely used test of creativity, the Torrance Tests of Creative Thinking (TTCT):

There's much more to the TTCT than this, but regardless, items like this might make you wonder whether something we call "creativity" can be represented by numerical scores. More on that shortly.

Statistical complacency

This two-part newsletter was prompted by a new study (which I'll discuss next week) looking at whether marijuana can enhance creativity. It's a cleverly done study, and, like others of its kind, it illustrates that statistics is a powerful tool whose power can be misused.

Familiarity breeds complacency. Over the past century (and for the first time in history), tossing around statistical factoids has become easy – so easy that the numbers sometimes get cited carelessly. For instance, in the New York Times last week, David Brooks, commenting on the strength of American capitalism, noted that he was

"especially struck by how much America invests in its own people. America spends roughly 37 percent more per student on schooling than the average for the Organization for Economic Cooperation and Development [OECD], a collection of mostly rich peer nations."

The kindest interpretation of this passage is that it's lazy. Of course we spend a lot per student. We spend more on just about everything, because we're the richest country in the world. However, compared to other OECD countries, we're actually below average in the percentage of gross domestic product (GDP) spent on K-12 education. In other words, we have a lot more money, but we spend proportionally less of it on educating children. (And, as explained in the Appendix, we tend to get less for our money.)
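To see how both claims can be true at once, here is a toy calculation in Python. The two countries and every number in it are hypothetical, chosen only so that one country spends 37% more per student while devoting a smaller share of its GDP to schooling. They are not actual OECD figures.

```python
from dataclasses import dataclass


@dataclass
class Country:
    """A hypothetical country; all figures are invented for illustration."""
    name: str
    gdp: float                 # total economic output, in arbitrary currency units
    students: int              # number of K-12 students
    spend_per_student: float   # annual K-12 spending per student, same units as gdp

    def share_of_gdp(self) -> float:
        """Fraction of GDP that goes to K-12 education."""
        return self.spend_per_student * self.students / self.gdp


# Hypothetical: "rich" has twice the GDP of "peer" and the same number of students.
rich = Country("rich", gdp=20_000_000, students=100, spend_per_student=13_700)
peer = Country("peer", gdp=10_000_000, students=100, spend_per_student=10_000)

# Relative per-student advantage of "rich" over "peer".
extra_per_student = rich.spend_per_student / peer.spend_per_student - 1

print(f"Per-student spending advantage: {extra_per_student:.0%}")
print(f"{rich.name}: {rich.share_of_gdp():.2%} of GDP")
print(f"{peer.name}: {peer.share_of_gdp():.2%} of GDP")
```

The richer country wins on dollars per student and loses on share of GDP. Which number you quote depends on whether you want to show what a country can afford or how much of it actually goes to schools.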

Misrepresentations like this are common and illustrate the need for caution around statistical data. As for creativity, the problem is that ever since the first systematic explorations of the 1950s, it has been treated as a statistical construct, and some researchers have grown complacent in the assumption that it can be captured by test scores.

In order to explain what I mean by that, I need to describe what creativity is and where it might come from.

A brief definition of creativity

Psychologists haven't reached consensus yet on an exact definition, but they do agree, at least, that for something to be called creative, it needs to be both novel and useful.

For instance, if I ask what you can do with a bunch of bricks, and you reply "build a brick house", that wouldn't be considered very creative. What you've proposed is useful, but not at all novel.

On the other hand, if your answer is "I could line the bricks up, with exactly six inches of space between them, and give each one a name containing three vowels", that would be very novel, but not useful, and so it would not be considered creative. (Context is essential though. If you were a professional artist, and your arrangement of named bricks was recognized as having aesthetic value, then, sure, a researcher would deem your work creative.)

Where does creativity come from?

Once upon a time, what we now call creative expression – at least in poetry – was viewed as a gift from the gods, and poets were like Amazon drivers.

In other words, poets themselves didn't create the substance of their art. They merely delivered it. They were the vessels, or conduits, for divine messages. Think of Homer, for example, asking the muse to sing of Achilles' anger, or to tell of the adventures of Odysseus. It was the muse, not Homer, who supposedly did the singing and telling.

In the West, it wasn't until the Renaissance that poets and other artists were widely considered to be the ultimate source of their art. They might rely on muses, other artists, a beloved person, nature, and/or drugs for inspiration, but what we would call their creative output was assumed to come from some murky internal process.

Contemporary views

Humans seem to be creative about everything, including the sources of creativity. In the 20th century, a new perspective emerged, as psychologists began to view creativity as a mental capacity, one that can be measured and quantitatively described, just like intelligence, memory, attention span, problem solving, etc. Since the earliest systematic treatment by J. P. Guilford in 1950, creativity has been studied from the same perspective (and, often, by the same people) responsible for IQ tests, college admissions tests, K-12 achievement tests, etc. Statistically speaking, these tests represent the same family. And, like IQ, aptitude, academic achievement, and all the rest, creativity itself is a statistical construct.

What does that mean exactly? Crudely speaking, researchers identify creativity in two ways. Both are statistical at the core.

1. One approach is to simply look at scores on creativity tests. The higher the score, the more creative the person. In a sense, that's fine – the scores can help you predict, say, who's better or worse at brainstorming – but it's totally agnostic as to the nature of creativity and the creative process. For all you know, a person may have listed 57 wonderfully creative uses for a brick in three minutes because the muse was secretly coaching them.

2. A second approach is to view creativity as some sort of mental capacity. Creativity has been treated as a form of intelligence, a skill, a personality trait, and a kind of aberrance, and in most cases it's assumed to have subcomponents. For example, following Guilford, most theorists distinguish between fluency and divergence.

Fluency refers to how quickly you can generate novel ideas. The more uses of a brick you can list in three minutes, the greater your fluency.

Divergence refers to how distinct each idea is from the rest. If you note, very quickly, that a brick can be used to break a window, break a door, break a flowerpot, break a dish, and break a chair, then your fluency is good but your ideas aren't very divergent. (Also, you may have anger management issues.)
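As a rough illustration of the difference, here is a toy scorer in Python. Fluency is simply a count of responses. For divergence, it uses a deliberately crude proxy that is an assumption made for this sketch, not a research instrument: responses that begin with the same word (like "break") are treated as variations on a single idea. Actual scoring schemes are far more elaborate.

```python
def fluency(responses: list[str]) -> int:
    """Fluency: how many ideas were produced within the time limit."""
    return len(responses)


def divergence(responses: list[str]) -> float:
    """Toy divergence proxy (an assumption, not a standard measure).

    Returns the number of distinct first words divided by the number of
    responses, so five "break a ..." answers count as one idea out of five.
    """
    if not responses:
        return 0.0
    first_words = {r.split()[0].lower() for r in responses if r.strip()}
    return len(first_words) / len(responses)


angry = ["break a window", "break a door", "break a flowerpot",
         "break a dish", "break a chair"]
varied = ["doorstop", "bookend", "flatten a chicken",
          "snowman shoes", "speed bump"]

print(fluency(angry), divergence(angry))    # same fluency as varied ...
print(fluency(varied), divergence(varied))  # ... but much higher divergence
```

Both lists are equally fluent, but only the second is divergent, which is exactly the distinction drawn above.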

The way that researchers tap into fluency, divergence, and other subcomponents of creativity is to administer tests and infer the subcomponents from people's responses. For example, if each person tends to respond the same way to items that measure divergence, but the way they respond to other kinds of items is different, then divergent thinking is assumed to be a "thing" – i.e., some sort of distinct capacity that exists somewhere in our brains and varies in strength from person to person. (If you've been trained in stats, you can see that I'm referring to factor analysis and other approaches to identifying latent variables.)

Has this kind of statistical approach helped us understand the nature of creativity?

I would argue no, not much. Saying that the Odyssey consists of words delivered by a muse leaves things pretty mysterious. So does using statistical analyses to infer capacities we call "fluent thinking", "divergent thinking", "original thinking", etc. We're not actually looking into people's brains and seeing these things. They are, in essence, statistical inferences. They might as well be muses.

In my opinion, what you see is what you get. A person who can list a lot of novel, distinct uses for a brick can probably do the same thing for a paperclip or a chair. You might infer that the person is good at brainstorming, or thinking outside the box, or whatever. These are desirable qualities. But creativity test scores won't tell you who will be a prominent artist, or scientist, or entrepreneur. Researchers haven't figured that out yet (though they've tried) because so much else contributes to these outcomes. At most, the research has revealed moderate correlations between scores on creativity tests and real-life outcomes (like the ability to brainstorm in a limited range of situations).

In short, there's a ton of research on creativity, but much of it taps into a narrow, fundamentally statistical conception of creative abilities and products.

A separate set of problems arises from evidence that creativity tests are culturally biased, provide mere snapshots of what individuals can do, and fluctuate far too much in response to minor changes in instructions and other details of the test questions.

So, should we conclude that some prospective employees, as well as children being screened for gifted programs, have been misrepresented by their creativity test scores? Next week I'll say more about this. For now, I'll just close with some comments on the connection between the measurement of creativity and a concern, among some, that artificial intelligence is becoming more creative than its human creators.

Final thoughts: Creativity and AI

Creativity, imaginativeness, inventiveness – whatever you wish to call it (assuming these terms are synonymous) – has always been mysterious. It may not be easy to improve existing measures. Perhaps nothing would help. Perhaps there are muses. All I would say, for the moment, is that statistical conceptions of creativity may not tell us as much as we think they do.

There's much concern nowadays about the disruptive creative potential of AI chatbots. Witness, for instance, recent panic over AI-generated songs in the style of Drake, Rihanna, and Kendrick Lamar. (One of the Drake tunes racked up over half a million streams on Spotify and temporarily fooled at least one music critic.) The tendency to equate creativity with scores on creativity tests has also opened the door to anxiety about AI creativity. Specifically, at least two studies have already looked at AI performance on alternative uses tasks, and one of them does give AI a slight edge over humans. I'll discuss these studies next week. Here I just want to suggest that whatever concerns we have about deepfakes and such should not include the fear that AI will become more creative, in the most important ways, than human beings.

This morning I gave ChatGPT the alternative uses brick test. The program was extremely fluent – it generated lots of ideas in a hurry. It was also very elaborative. It didn't just tell me you could exercise with a brick; it noted specifically that a brick could be used as a dumbbell to increase arm strength. On the whole, though, its responses didn't seem very novel, which surprised me given the size of its database. Even after I prompted it for greater novelty, unusualness, and originality, it mostly churned out ideas cribbed from the internet (bookend, doorstop, planter, hotplate). It also cheated by giving me some responses that would require many bricks (e.g., constructing a pet house). And it overlooked a few ideas. The website for This Old House, for instance, notes that one could use a brick to flatten a whole chicken while cooking it. That's pretty novel. (I guess it's useful too?) ChatGPT missed that one.

On the other hand, although I consider myself only moderately creative, it didn't take long to come up with ideas that are useful but much more novel than ChatGPT's. (I emailed five of my ideas and five of ChatGPT's, randomly chosen, to a friend, without noting who came up with each list. My friend agreed that mine were more novel.) For instance, I wrote that you could cut a brick in two and use it as shoes for a snowman. You could glue it to the sidewalk in front of your house to serve as a speed bump for bicyclists. Or (this is a little embarrassing), if it has two holes, you could fasten it to the front of your head at Halloween and pretend to be The Thing.

One might say that what I came up with is novel but not useful. I disagree. As a parent and grandparent, I can see how it might be very useful to make shoes for a snowman, prevent cyclists from running into little people, or entertain those little people on Halloween. ChatGPT can be taught to value these purposes, but it might not be able to prioritize them on its own. Meanwhile, what a person needs evolves from moment to moment, and creativity follows.

If you assume this kind of fluidity is essential to the creative process, then mainstream tests of creativity are indeed limited – and we don't need to worry about AI outperforming us. Doing well on tests like this would not predict encroachments on what researchers call big-C creativity – i.e., the kind that drives human progress. Those AI-generated songs aren't great. Even if they were, they're not likely to be innovative, given that the goal was to emulate musical styles that individual artists have already forged. (No offense if you're a fan, but is Drake the pinnacle of 21st century musical creativity anyway?) The day that AI composes a song that inspires joy, spontaneous dancing, and gooseflesh, then maybe we should worry. I don't see that happening. Perhaps I'm overly optimistic, but I see AI as eventually supporting rather than supplanting artists.

Next week I'll say more about creativity tests, statistics, AI, and some evidence-based strategies that seem to boost creative thinking. I'll also discuss that new study on whether marijuana makes people more creative.

Until then, thanks for reading!

Appendix: U.S. educational spending

This appendix has nothing to do with creativity. I just wanted to complete my thought about statistical complacency.

David Brooks praised America's investment in its people by noting that we spend 37% more on our students than the average OECD country does. This factoid is misleading in part because, as I mentioned, we're actually below average in the percentage of GDP spent on K-12 education. We're the richest country, but compared to peer nations we spend proportionally less than average on educating our children.

I want to add that even if you focus on absolute expenditures, America gets less for its money than most of our peers do. For instance, among the 38 OECD countries, our graduation rates have been middle-of-the-pack for many years. And we're somewhat of an exception to the general trend that the more a country spends on education per student, the better its students do on the Programme for International Student Assessment (PISA).

Since 2000, PISA tests in reading, math, and science have been administered every three years to 15-year-olds in nearly 80 countries. Although the absolute dollar value of our educational expenditures exceeds the OECD mean, US students have scored well below the OECD average in mathematics on every administration of the test. In reading and science, we've fared somewhat better but have never cracked the top 10 or matched most of the other countries with comparable spending levels.

More could be said, but you get the idea. Though statistical misrepresentations are common, the one I've called out here is especially disturbing, because at least some of the challenges in American education are linked to insufficient funding for essentials such as teacher salaries. (Also, Mr. Brooks, a generally thoughtful writer, seems to be especially prone to misrepresenting statistical data – see here for a critique of his views on global happiness.)